2006: WHAT IS YOUR DANGEROUS IDEA?

When most people think of torturers, stalkers, robbers, rapists, and murderers, they imagine crazed, drooling monsters with maniacal, Charles Manson-like eyes. The calm, normal-looking image staring back at you from the bathroom mirror is a truer representation. The dangerous idea is that all of us contain within our large brains adaptations whose functions are to commit despicable atrocities against our fellow humans, atrocities most would label evil.

The unfortunate fact is that killing has proved to be an effective solution to an array of adaptive problems in the ruthless evolutionary games of survival and reproductive competition: Preventing injury, rape, or death; protecting one's children; eliminating a crucial antagonist; acquiring a rival's resources; securing sexual access to a competitor's mate; preventing an interloper from appropriating one's own mate; and protecting vital resources needed for reproduction.

The idea that evil has evolved is dangerous on several counts. If our brains contain psychological circuits that can trigger murder, genocide, and other forms of malevolence, then perhaps we can't hold those who commit carnage responsible: "It's not my client's fault, your honor, his evolved homicide adaptations made him do it." Understanding causality, however, does not exonerate murderers, whether the tributaries trace back to human evolutionary history or to modern exposure to alcoholic mothers, violent fathers, or the ills of bullying, poverty, drugs, or computer games. It would be dangerous if the theory of the evolved murderous mind were misused to set killers free.

The evolution of evil is dangerous for a more disconcerting reason. We like to believe that evil can be objectively located in a particular set of evil deeds, or within the subset of people who perpetrate horrors on others, regardless of the perspective of the perpetrator or victim. That is not the case. The perspectives of perpetrator and victim differ profoundly. Many view killing a member of one's in-group, for example, as evil, but take a different view of killing those in the out-group. Some people point to the biblical commandment "thou shalt not kill" as an absolute. Closer biblical inspection reveals that this injunction applied only to murder within one's group.

Conflict with terrorists provides a modern example. Osama bin Laden declared: "The ruling to kill the Americans and their allies — civilians and military — is an individual duty for every Muslim who can do it in any country in which it is possible to do it." What is evil from the perspective of an American who is a potential victim is an act of responsibility and higher moral good from the terrorist's perspective. Similarly, when President Bush identified an "axis of evil," he rendered it moral for Americans to kill those falling under that axis — a judgment undoubtedly considered evil by those whose lives have become imperiled.

At a rough approximation, we view as evil people who inflict massive evolutionary fitness costs on us, our families, or our allies. No one summarized these fitness costs better than the feared conqueror Genghis Khan (1167-1227): "The greatest pleasure is to vanquish your enemies, to chase them before you, to rob them of their wealth, to see their near and dear bathed in tears, to ride their horses and sleep on the bellies of their wives and daughters."

We can be sure that the families of the victims of Genghis Khan saw him as evil. We can be just as sure that his many sons, whose harems he filled with women of the conquered groups, saw him as a venerated benefactor. In modern times, we react with horror at Mr. Khan describing the deep psychological satisfaction he gained from inflicting fitness costs on victims while purloining fitness fruits for himself. But it is sobering to realize that perhaps half a percent of the world's population today are descendants of Genghis Khan.

On reflection, the dangerous idea may not be that murder historically has been advantageous to the reproductive success of killers; nor that we all house homicidal circuits within our brains; nor even that all of us are lineal descendants of ancestors who murdered. The danger comes from people who refuse to recognize that there are dark sides of human nature that cannot be wished away by attributing them to the modern ills of culture, poverty, pathology, or exposure to media violence. The danger comes from failing to gaze into the mirror and come to grips with the capacity for evil in all of us.

An idea that would be "dangerous if true" is what Francis Crick referred to as "the astonishing hypothesis": the notion that our conscious experience and sense of self are based entirely on the activity of a hundred billion bits of jelly, the neurons that constitute the brain. We take this for granted in these enlightened times, but even so, it never ceases to amaze me.

Some scholars have criticized Crick's tongue-in-cheek phrase (and the title of his book) on the grounds that the hypothesis he refers to is "neither astonishing nor a hypothesis" (since we already know it to be true). Yet the far-reaching philosophical, moral and ethical dilemmas posed by his hypothesis have not been recognized widely enough. It is in many ways the ultimate dangerous idea.

Let's put this in historical perspective.

Freud once pointed out that the history of ideas in the last few centuries has been punctuated by "revolutions," major upheavals of thought that have forever altered our view of ourselves and our place in the cosmos.

First, there was the Copernican system dethroning the earth as the center of the cosmos.

Second, there was the Darwinian revolution: the idea that, far from being the climax of "intelligent design," we are merely neotenous apes that happen to be slightly cleverer than our cousins.

Third, the Freudian view that even though you claim to be "in charge" of your life, your behavior is in fact governed by a cauldron of drives and motives of which you are largely unconscious.

And fourth, the discovery of DNA and the genetic code with its implication (to quote James Watson) that "There are only molecules. Everything else is sociology."

To this list we can now add the fifth, the "neuroscience revolution" and its corollary pointed out by Crick—the "astonishing hypothesis"—that even our loftiest thoughts and aspirations are mere byproducts of neural activity. We are nothing but a pack of neurons.

If all this seems dehumanizing, you haven't seen anything yet.

Our brains are constantly subjected to the demands of multi-tasking and a seemingly endless cacophony of information from diverse sources. Cell phones, emails, computers, and cable television are omnipresent, not to mention such archaic venues as books, newspapers and magazines.

This induces an unrelenting barrage of neuronal activity that in turn produces long-lasting structural modification in virtually all compartments of the nervous system. A fledgling industry touts the virtues of exercising your brain for self-improvement. Programs are offered for how to make virtually any region of your neocortex a more efficient processor. Parents are urged to begin such regimens in preschool children, and adults are told to take advantage of their brain's plastic properties for professional advancement. The evidence for such claims is still lacking, but one thing is clear. Even if brain exercise does work, the subsequent waves of neuronal activity stemming from simply living a modern lifestyle are likely to eradicate its presumed hard-earned benefits.

My dangerous idea is that what's needed to attain optimal brain performance, with or without prior brain exercise, is a 24-hour period of absolute solitude. By absolute solitude I mean no verbal interactions of any kind (written or spoken, live or recorded) with another human being. I would venture that a significantly higher proportion of people reading these words have tried skydiving than have experienced one day of absolute solitude.

What to do to fill the waking hours? That's a question that each person would need to answer for him/herself. Unless you've spent time in a monastery or in solitary confinement it's unlikely that you've had to deal with this issue. The only activity not proscribed is thinking. Imagine if everyone in this country had the opportunity to do nothing but engage in uninterrupted thought for one full day a year!

A national day of absolute solitude would do more to improve the brains of all Americans than any other one-day program. (I leave it to the lawmakers to figure out a plan for implementing this proposal.) The danger stems from the fact that a 24-hour period of uninterrupted thinking could cause irrevocable upheavals in much of what our society currently holds sacred. But whether that would improve our present state of affairs cannot be guaranteed.

All this will soon undergo a revolutionary transformation. DNA sequencing technology continues to improve at an exponential pace. We are approaching the time when we will go from having a few human genome sequences to complex databases containing first tens, then hundreds of thousands, of complete genomes, and then millions. Within a decade we will begin rapidly accumulating the complete genetic code of humans along with the phenotypic repertoire of the same individuals. By performing multifactorial analysis of the DNA sequence variations, together with the comprehensive phenotypic information gleaned from every branch of human investigatory discipline, we will for the first time in history be able to answer quantitatively the question of what is genetic and what is due to the environment. This is already taking place in cancer research, where we can measure the differences between genetic mutations inherited from our parents and those acquired over our lives from environmental damage. This good news will help transform the treatment of cancer by allowing us to know which proteins need to be targeted.

However, when these powerful new computers and databases are used to help us analyze who we are as humans, will society at large, much of it ignorant of and afraid of science, be ready for the answers we are likely to get?

For example, we know from experiments on fruit flies that there are genes that control many behaviors, including sexual activity. We sequenced the dog genome a couple of years ago, and now an additional breed has had its genome decoded. The canine world offers a unique look into the genetic basis of behavior. The large number of distinct dog breeds originated from the wolf genome by selective breeding, yet each breed retains only a subset of the wolf behavior spectrum. We know that there is a genetic basis not only for the appearance of the breeds, which differ 30-fold in weight and 6-fold in height, but also for their inherited behaviors. For example, border collies can use the power of their stare to herd sheep instead of freezing them in place prior to devouring them.

We attribute behaviors in other mammalian species to genes and genetics, but when it comes to humans we seem to like the notion that we are all created equal, or that each child is a "blank slate." As we obtain the sequences of more and more mammalian genomes, including more human sequences, together with basic observations and some common sense, we will be forced to turn away from the politically correct interpretations, as our new genomic tool sets give us the means to begin sorting out the reality of nature versus nurture. In other words, we are at the threshold of a realistic biology of humankind.

It will inevitably be revealed that there are strong genetic components associated with most aspects of what we attribute to human existence including personality subtypes, language capabilities, mechanical abilities, intelligence, sexual activities and preferences, intuitive thinking, quality of memory, will power, temperament, athletic abilities, etc. We will find unique manifestations of human activity linked to genetics associated with isolated and/or inbred populations.

The danger rests with what we already know: that we are not all created equal. Further danger comes with our ability to quantify and measure the genetic side of the equation before we can fully understand the much more difficult task of evaluating environmental components of human existence. The genetic determinists will appear to be winning again, but we cannot let them forget the range of human achievement that is possible even with our limited genetic repertoire.

Public opinion surveys (at least in the UK) reveal a generally positive attitude to science. However, this is coupled with widespread worry that science may be 'running out of control'. This latter idea is, I think, a dangerous one, because if widely believed it could be self-fulfilling.

In the 21st century, technology will change the world faster than ever — the global environment, our lifestyles, even human nature itself. We are far more empowered by science than any previous generation was: it offers immense potential — especially for the developing world — but there could be catastrophic downsides. We are living in the first century when the greatest risks come from human actions rather than from nature.

Almost any scientific discovery has a potential for evil as well as for good; its applications can be channelled either way, depending on our personal and political choices; we can't accept the benefits without also confronting the risks. The decisions that we make, individually and collectively, will determine whether the outcomes of 21st century sciences are benign or devastating. But there's a real danger that, rather than campaigning energetically for optimum policies, we'll be lulled into inaction by a feeling of fatalism: a belief that science is advancing so fast, and is so much influenced by commercial and political pressures, that nothing we can do makes any difference.

The present share-out of resources and effort between different sciences is the outcome of a complicated 'tension' between many extraneous factors. And the balance is suboptimal. This seems so whether we judge in purely intellectual terms, or take account of likely benefit to human welfare. Some subjects have had the 'inside track' and gained disproportionate resources. Others, such as environmental research, renewable energy sources, biodiversity studies and so forth, deserve more effort. Within medical research the focus is disproportionately on cancer and cardiovascular studies, the ailments that loom largest in prosperous countries, rather than on the infectious diseases endemic in the tropics.

Choices on how science is applied — to medicine, the environment, and so forth — should be the outcome of debate extending way beyond the scientific community. Far more research and development can be done than we actually want or can afford to do; and there are many applications of science that we should consciously eschew.

Even if all the world's scientific academies agreed that a specific type of research had an especially disquieting net 'downside', and all countries, in unison, imposed a ban, what is the chance that it could be enforced effectively enough? In view of the failure to control drug smuggling or homicides, it is unrealistic to expect that, once the genie is out of the bottle, we can ever be fully secure against the misuse of science. And in our ever more interconnected world, commercial pressures are harder to control and regulate. The challenges and difficulties of 'controlling' science in this century will indeed be daunting.

Cynics would go further, and say that anything that is scientifically and technically possible will be done, somewhere, sometime, despite ethical and prudential objections, and whatever the regulatory regime. Whether this idea is true or false, it's an exceedingly dangerous one, because it engenders despairing pessimism and demotivates efforts to secure a safer and fairer world. The future will best be safeguarded, and science has the best chance of being applied optimally, through the efforts of people who are less fatalistic.

Any challenge to the normal probability bell curve can have far-reaching consequences because a great deal of modern science and engineering rests on this special bell curve. Most of the standard hypothesis tests in statistics rely on the normal bell curve either directly or indirectly. These tests permeate the social and medical sciences and underlie the poll results in the media. Related tests and assumptions underlie the decision algorithms in radar and cell phones that decide whether the incoming energy blip is a 0 or a 1. Management gurus exhort manufacturers to follow the "six sigma" creed of reducing the variance in products to only two or three defective products per million in accord with "sigmas" or standard deviations from the mean of a normal bell curve. Models for trading stock and bond derivatives assume an underlying normal bell-curve structure. Even quantum and signal-processing uncertainty principles or inequalities involve the normal bell curve as the equality condition for minimum uncertainty. Deviating even slightly from the normal bell curve can sometimes produce qualitatively different results.
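The "sigma" arithmetic behind the six-sigma creed can be checked directly against the normal bell curve. The sketch below is ours, not the essay's: the helper names are hypothetical, and `dpmo_shifted` assumes the common industry convention (not stated in the essay) that the process mean may drift 1.5 standard deviations toward one specification limit, which is how six sigma becomes the often-quoted figure of roughly 3.4 defects per million. Every number it prints depends on the thin tails of the normal curve.

```python
import math

def normal_upper_tail(z: float) -> float:
    """P(Z > z) for a standard normal Z, via the complementary error function."""
    return 0.5 * math.erfc(z / math.sqrt(2.0))

def dpmo_centered(k_sigma: float) -> float:
    """Defects per million when both spec limits sit k_sigma SDs from a centered mean."""
    return 1e6 * 2.0 * normal_upper_tail(k_sigma)

def dpmo_shifted(k_sigma: float, shift: float = 1.5) -> float:
    """Defects per million under the ASSUMED 1.5-sigma drift convention:
    the mean moves `shift` SDs toward one limit and only that near tail is counted."""
    return 1e6 * normal_upper_tail(k_sigma - shift)

for k in (3, 4, 5, 6):
    print(f"{k} sigma: centered {dpmo_centered(k):.3g} DPMO, "
          f"shifted {dpmo_shifted(k):.3g} DPMO")
```

A perfectly centered six-sigma process would produce only about 0.002 defects per million; the conventional drift is what raises the figure to about 3.4.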

The proposed dangerous idea stems from two facts about the normal bell curve.

First: The normal bell curve is not the only bell curve. There are at least as many different bell curves as there are real numbers. This simple mathematical fact poses at once a grammatical challenge to the title of Charles Murray's IQ book The Bell Curve. Murray should have used the indefinite article "A" instead of the definite article "The." This is but one of many examples that suggest that most scientists simply equate the entire infinite set of probability bell curves with the normal bell curve of textbooks. Nature need not share the same practice. Human and non-human behavior can be far more diverse than the classical normal bell curve allows.

Second: The normal bell curve is a skinny bell curve. It puts most of its probability mass in the main lobe or bell while the tails quickly taper off exponentially. So "tail events" appear rare simply as an artifact of this bell curve's mathematical structure. This limitation may be fine for approximate descriptions of "normal" behavior near the center of the distribution. But it largely rules out or marginalizes the wide range of phenomena that take place in the tails.
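To see how quickly the normal tails taper, the sketch below compares the two-sided tail probability P(|X| > k) of a standard normal curve with that of a unit-scale Cauchy curve, the very-thick-tailed stable curve the essay mentions later. Giving both curves unit scale is our simplifying assumption; other matchings change the constants but not the qualitative gap.

```python
import math

def normal_two_sided_tail(k: float) -> float:
    """P(|X| > k) for a standard normal X; decays roughly like exp(-k**2 / 2)."""
    return math.erfc(k / math.sqrt(2.0))

def cauchy_two_sided_tail(k: float) -> float:
    """P(|X| > k) for a unit-scale Cauchy X; decays only like 2 / (pi * k)."""
    return 1.0 - (2.0 / math.pi) * math.atan(k)

# Assumption: both curves use unit scale; this compares shapes, it is not a fit to data.
for k in (1, 2, 3, 5, 10):
    n, c = normal_two_sided_tail(k), cauchy_two_sided_tail(k)
    print(f"k={k:>2}  normal={n:.2e}  Cauchy={c:.3f}  ratio={c / n:.2g}")
```

At k = 3 the normal curve leaves about 0.27 percent of its mass in the tails while the Cauchy curve leaves about 20 percent; by k = 10 the ratio exceeds 10^20.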

Again most bell curves have thick tails. Rare events are not so rare if the bell curve has thicker tails than the normal bell curve has. Telephone interrupts are more frequent. Lightning flashes are more frequent and more energetic. Stock market fluctuations or crashes are more frequent. How much more frequent they are depends on how thick the tail is — and that is always an empirical question of fact. Neither logic nor assume-the-normal-curve habit can answer the question. Instead scientists need to carry their evidentiary burden a step further and apply one of the many available statistical tests to determine and distinguish the bell-curve thickness.
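The essay does not name a particular tail test. One simple and widely used tool (our choice) is the Hill estimator of the tail index, which averages the log-excesses of the k largest absolute values over a threshold. For a stable bell curve with alpha below 2 the tail index equals alpha, so Cauchy data should give an estimate near 1, while thin-tailed normal data give a much larger value. This is a rough sketch: the Hill estimator assumes power-law tails, is sensitive to the choice of k, and the sample size, k, and seed below are arbitrary assumptions.

```python
import math
import random

def hill_tail_index(samples, k):
    """Hill estimate of the tail index from the k largest absolute values.
    Smaller estimates mean thicker tails."""
    xs = sorted((abs(x) for x in samples), reverse=True)
    threshold = xs[k]  # the (k+1)-th largest |x| serves as the threshold
    mean_log_excess = math.fsum(math.log(x / threshold) for x in xs[:k]) / k
    return 1.0 / mean_log_excess

# Assumptions for illustration: sample size, k, and seed are arbitrary choices.
rng = random.Random(0)
n, k = 100_000, 1_000
cauchy_sample = [math.tan(math.pi * (rng.random() - 0.5)) for _ in range(n)]
normal_sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
cauchy_alpha = hill_tail_index(cauchy_sample, k)
normal_alpha = hill_tail_index(normal_sample, k)
print(f"Cauchy sample: {cauchy_alpha:.2f}")  # should land near the true index 1
print(f"normal sample: {normal_alpha:.2f}")  # much larger: thin tails
```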

One response to this call for tail-thickness sensitivity is that logic alone can decide the matter because of the so-called central limit theorem of classical probability theory. This important "central" result states that some suitably normalized sums of random terms will converge to a standard normal random variable and thus have a normal bell curve in the limit. So Gauss and a lot of other long-dead mathematicians got it right after all and thus we can continue to assume normal bell curves with impunity.

That argument fails in general for two reasons.

The first reason it fails is that the classical central limit theorem result rests on a critical assumption that need not hold and that often does not hold in practice. The theorem assumes that the random dispersion about the mean is so comparatively slight that a particular measure of this dispersion — the variance or the standard deviation — is finite or does not blow up to infinity in a mathematical sense. Most bell curves have infinite or undefined variance even though they have a finite dispersion about their center point. The error is not in the bell curves but in the two-hundred-year-old assumption that variance equals dispersion. It does not in general. Variance is a convenient but artificial and non-robust measure of dispersion. It tends to overweight "outliers" in the tail regions because the variance squares the underlying errors between the values and the mean. Such squared errors simplify the math but produce the infinite effects. These effects do not appear in the classical central limit theorem because the theorem assumes them away.
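The gap between variance and dispersion appears as soon as one simulates a curve with infinite variance. The sketch below, under our own assumptions (standard Cauchy draws generated by the inverse-CDF transform, arbitrary sample sizes and seed), tracks the sample variance and the interquartile range (IQR) as the sample grows. The variance never settles down because a few squared outliers dominate it, while the IQR converges to its true value of 2 for the standard Cauchy curve.

```python
import math
import random
import statistics

def sample_variance(xs):
    """Mean squared deviation from the sample mean (squaring magnifies outliers)."""
    mean = math.fsum(xs) / len(xs)
    return math.fsum((x - mean) ** 2 for x in xs) / len(xs)

def iqr(xs):
    """Interquartile range: a dispersion measure that stays finite for any bell curve."""
    q1, _, q3 = statistics.quantiles(xs, n=4)
    return q3 - q1

# Assumption: standard Cauchy draws via the inverse-CDF transform; seed is arbitrary.
rng = random.Random(1)
results = {}
for n in (1_000, 10_000, 100_000):
    draws = [math.tan(math.pi * (rng.random() - 0.5)) for _ in range(n)]
    results[n] = (sample_variance(draws), iqr(draws))
    print(f"n={n:>7}  variance={results[n][0]:.3g}  IQR={results[n][1]:.3f}")
```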

The second reason the argument fails is that the central limit theorem itself is just a special case of a more general result called the generalized central limit theorem. The generalized central limit theorem yields convergence to thick-tailed bell curves in the general case. Indeed it yields convergence to the thin-tailed normal bell curve only in the special case of finite variances. These general cases define the infinite set of the so-called stable probability distributions and their symmetric versions are bell curves. There are still other types of thick-tailed bell curves (such as the Laplace bell curves used in image processing and elsewhere) but the stable bell curves are the best known and have several nice mathematical properties. The figure below shows the normal or Gaussian bell curve superimposed over three thicker-tailed stable bell curves. The catch in working with stable bell curves is that their mathematics can be nearly intractable. So far we have closed-form solutions for only two stable bell curves (the normal or Gaussian and the very-thick-tailed Cauchy curve) and so we have to use transform and computer techniques to generate the rest. Still the exponential growth in computing power has long since made stable or thick-tailed analysis practical for many problems of science and engineering.
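The contrast between the classical and generalized theorems can be watched in a few lines. In the sketch below (our illustration; the replication count and seed are arbitrary), each row averages n independent draws and measures the spread of those averages by their IQR. For normal draws the spread shrinks like 1/sqrt(n), as the classical theorem promises. The average of n standard Cauchy variables is again a standard Cauchy variable, so for Cauchy draws the spread never shrinks: the Cauchy curve is its own stable limit.

```python
import math
import random
import statistics

def normal(rng):
    """One standard normal draw."""
    return rng.gauss(0.0, 1.0)

def cauchy(rng):
    """One standard Cauchy draw (the alpha = 1 stable curve) by inverse CDF."""
    return math.tan(math.pi * (rng.random() - 0.5))

def iqr(xs):
    q1, _, q3 = statistics.quantiles(xs, n=4)
    return q3 - q1

def iqr_of_means(draw, n_terms, reps, rng):
    """IQR of `reps` sample means, each averaging `n_terms` independent draws."""
    means = [math.fsum(draw(rng) for _ in range(n_terms)) / n_terms for _ in range(reps)]
    return iqr(means)

# Assumption: 4000 replications and the seed are arbitrary illustration choices.
rng = random.Random(2)
results = {}
for n in (1, 10, 100):
    results[n] = (iqr_of_means(normal, n, 4000, rng), iqr_of_means(cauchy, n, 4000, rng))
    print(f"n={n:>3}  normal IQR={results[n][0]:.3f}  Cauchy IQR={results[n][1]:.3f}")
```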

This last point shows how competing bell curves offer a new context for judging whether given data reasonably obey a normal bell curve. One of the most popular eyeball tests for normality is the PP or probability plot of the data. The data should almost perfectly fit a straight line if they come from a normal probability distribution. But this seldom happens in practice. Instead, real data snake all around the ideal straight line in a PP diagram. So it is easy for the user to shrug and call any deviation from the ideal line good enough in the absence of a direct bell-curve competitor. A fairer test is to compare the normal PP plot with the best-fitting thick-tailed or stable PP plot. The data may well line up better in a thick-tailed PP diagram than they do in the usual normal PP diagram. Such evidence would reject the normal bell-curve hypothesis in favor of the thicker-tailed alternative. Ignoring these thick-tailed alternatives favors accepting the less-accurate normal bell curve and thus leads to underestimating the occurrence of tail events.
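The fairer comparison described above can be sketched numerically. In the code below (our construction, not a standard named test), each candidate curve is fitted by the same robust rule, matching its median and quartiles to the data, and each fit is scored by the largest vertical distance of its P-P plot from the ideal diagonal, a quantity closely related to the Kolmogorov-Smirnov statistic. Fitting both curves by quartiles is a deliberate assumption: it keeps the two curves in agreement near the center, so the score reflects tail shape rather than an inflated sample standard deviation.

```python
import math
import random
import statistics

NORMAL_Q3 = statistics.NormalDist().inv_cdf(0.75)  # upper quartile of a unit normal

def cauchy_cdf(x, loc, scale):
    return 0.5 + math.atan((x - loc) / scale) / math.pi

def pp_max_gap(data, cdf):
    """Largest vertical distance between a P-P plot and the ideal diagonal,
    using plotting positions (i + 0.5) / n for the sorted data (0-based i)."""
    xs = sorted(data)
    n = len(xs)
    return max(abs((i + 0.5) / n - cdf(x)) for i, x in enumerate(xs))

def compare_fits(data):
    """Assumption: fit both curves by median and quartiles, then score each P-P plot."""
    q1, med, q3 = statistics.quantiles(data, n=4)
    half_iqr = (q3 - q1) / 2.0
    normal_fit = statistics.NormalDist(med, half_iqr / NORMAL_Q3)
    normal_gap = pp_max_gap(data, normal_fit.cdf)
    cauchy_gap = pp_max_gap(data, lambda x: cauchy_cdf(x, med, half_iqr))
    return normal_gap, cauchy_gap

rng = random.Random(4)
cauchy_data = [math.tan(math.pi * (rng.random() - 0.5)) for _ in range(5000)]
normal_data = [rng.gauss(0.0, 1.0) for _ in range(5000)]
for name, data in (("Cauchy data", cauchy_data), ("normal data", normal_data)):
    normal_gap, cauchy_gap = compare_fits(data)
    print(f"{name}: normal-fit gap={normal_gap:.3f}  Cauchy-fit gap={cauchy_gap:.3f}")
```

The gaps are descriptive scores; because the parameters are estimated from the same data, the usual Kolmogorov-Smirnov p-values would not apply without adjustment.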

Stable or thick-tailed probability curves continue to turn up as more scientists and engineers search for them. They tend to accurately model impulsive phenomena such as noise in telephone lines or in the atmosphere or in fluctuating economic assets. Skewed versions appear to best fit the data for the Ethernet traffic in bit packets. Here again the search is ultimately an empirical one for the best-fitting tail thickness. Similar searches will only increase as the math and software of thick-tailed bell curves work their way into textbooks on elementary probability and statistics. Much of it is already freely available on the Internet.

Thicker-tail bell curves also imply that there is not just a single form of pure white noise. Here too there are at least as many forms of white noise (or any colored noise) as there are real numbers. Whiteness just means that the noise spikes or hisses and pops are independent in time or that they do not correlate with one another. The noise spikes themselves can come from any probability distribution and in particular they can come from any stable or thick-tailed bell curve. The figure below shows the normal or Gaussian bell curve and three kindred thicker-tailed bell curves and samples of their corresponding white noise. The normal curve has the upper-bound alpha parameter of 2 while the thicker-tailed curves have lower values — tail thickness increases as the alpha parameter falls. The white noise from the thicker-tailed bell curves becomes much more impulsive as their bell narrows and their tails thicken because then more extreme events or noise spikes occur with greater frequency.
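Since only the normal and Cauchy curves have closed-form densities, thick-tailed white noise is usually generated by simulation. One standard method (our choice; the essay does not say how its figure was made) is the Chambers-Mallows-Stuck transform, which turns a uniform angle and an exponential variable into a symmetric alpha-stable draw. The sketch below generates independent draws for alpha = 2, 1.5, and 1 and counts the spikes beyond an arbitrary threshold of 10. In this standard parameterization alpha = 2 gives a normal curve with variance 2, and alpha = 1 gives the standard Cauchy curve.

```python
import math
import random

def symmetric_stable(alpha, rng):
    """One draw from a standard symmetric alpha-stable curve (0 < alpha <= 2),
    using the Chambers-Mallows-Stuck transform."""
    v = math.pi * (rng.random() - 0.5)   # uniform angle on [-pi/2, pi/2)
    w = rng.expovariate(1.0)             # unit-mean exponential
    if alpha == 1.0:
        return math.tan(v)               # the Cauchy case
    return (math.sin(alpha * v) / math.cos(v) ** (1.0 / alpha)
            * (math.cos((1.0 - alpha) * v) / w) ** ((1.0 - alpha) / alpha))

def white_noise(alpha, n, seed=0):
    """n independent stable draws: white (uncorrelated) but possibly impulsive noise."""
    rng = random.Random(seed)
    return [symmetric_stable(alpha, rng) for _ in range(n)]

# Assumption: the spike threshold of 10 and the sample size are arbitrary.
spike_counts = {}
for alpha in (2.0, 1.5, 1.0):
    noise = white_noise(alpha, 100_000)
    spike_counts[alpha] = sum(abs(x) > 10 for x in noise)
    print(f"alpha={alpha}: {spike_counts[alpha]} samples with |x| > 10")
```

The spike count climbs as alpha falls, which is the growing impulsiveness the figure shows.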

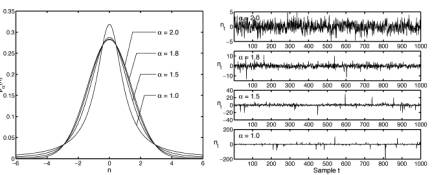

Competing bell curves: The figure on the left shows four superimposed symmetric alpha-stable bell curves with different tail thicknesses, while the plots on the right show samples of their corresponding forms of white noise. The parameter α describes the thickness of a stable bell curve and ranges from 0 to 2. Tails grow thicker as α grows smaller. The white noise grows more impulsive as the tails grow thicker. The Gaussian or normal bell curve (α = 2) has the thinnest tail of the four stable curves, while the Cauchy bell curve (α = 1) has the thickest tails and thus the most impulsive noise. Note the different magnitude scales on the vertical axes. All the bell curves have finite dispersion, while only the Gaussian or normal bell curve has a finite variance or finite standard deviation.

My colleagues and I have recently shown that most mathematical models of spiking neurons in the retina can benefit from small amounts of added noise, in the sense that the noise increases their Shannon bit count, and that they continue to benefit when the added noise is thick-tailed or "infinite-variance" noise. The same result holds experimentally for a carbon nanotube transistor that detects signals in the presence of added electrical noise.
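A noise benefit of this kind is usually called stochastic resonance. The sketch below is a toy version under assumptions of our own, not the retina models or the nanotube transistor: an equiprobable input of plus or minus 0.5 sits below a detector threshold of 1, so without noise the detector never fires and transmits zero bits. The code computes the exact Shannon mutual information between input and output for Gaussian noise and for Cauchy (infinite-variance) noise of a given scale.

```python
import math

def binary_entropy(p):
    """Entropy, in bits, of a yes/no outcome with probability p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)

def gaussian_exceed(gap, scale):
    """P(noise > gap) for zero-mean Gaussian noise with standard deviation `scale`."""
    if scale == 0.0:
        return 1.0 if gap < 0.0 else 0.0
    return 0.5 * math.erfc(gap / (scale * math.sqrt(2.0)))

def cauchy_exceed(gap, scale):
    """P(noise > gap) for Cauchy noise with scale `scale` (infinite variance)."""
    if scale == 0.0:
        return 1.0 if gap < 0.0 else 0.0
    return 0.5 - math.atan(gap / scale) / math.pi

def threshold_bits(exceed, scale, amplitude=0.5, threshold=1.0):
    """Mutual information (bits) between an equiprobable input of +/- amplitude
    and a detector that fires when input + noise exceeds the threshold."""
    p_hi = exceed(threshold - amplitude, scale)  # P(fire | input = +amplitude)
    p_lo = exceed(threshold + amplitude, scale)  # P(fire | input = -amplitude)
    p_fire = 0.5 * (p_hi + p_lo)
    return binary_entropy(p_fire) - 0.5 * (binary_entropy(p_hi) + binary_entropy(p_lo))

# Assumption: amplitude 0.5, threshold 1, and these noise scales are arbitrary.
for scale in (0.0, 0.1, 0.5, 2.0, 20.0):
    print(f"scale={scale:>4}: Gaussian {threshold_bits(gaussian_exceed, scale):.4f} bits, "
          f"Cauchy {threshold_bits(cauchy_exceed, scale):.4f} bits")
```

For both noise types the bit count is zero with no noise, rises at moderate noise levels, and falls again once the noise swamps the signal.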

Thick-tailed bell curves further call into question what counts as a statistical "outlier" or bad data: Is a tail datum error or pattern? The line between extreme and non-extreme data is not just fuzzy but depends crucially on the underlying tail thickness.

The usual rule of thumb is that a datum is suspect if it lies more than three, or even two, standard deviations from the mean. Such rules of thumb reflect both the tacit assumption that dispersion equals variance and the classical central-limit effect that large data sets are not just approximately bell curves but approximately thin-tailed normal bell curves. An empirical test of the tails may well justify the latter thin-tailed assumption in many cases. But the mere assertion of the normal bell curve does not. So "rare" events may not be so rare after all.
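The dependence of the outlier line on tail thickness can be made concrete. The sketch below (our assumptions: a Cauchy curve as the thick-tailed example, matched to the unit normal at its quartiles so the two agree near the center) fixes the false-alarm rate that the three-standard-deviation rule implies for normal data, about 0.27 percent, and asks how far out the cutoff must move to keep that same rate when the data are Cauchy.

```python
import math
import statistics

FLAG_RATE = 0.0027  # share of normal data beyond three standard deviations

def normal_cutoff(rate, sigma=1.0):
    """|x| beyond which a fraction `rate` of normal data lies."""
    return sigma * statistics.NormalDist().inv_cdf(1.0 - rate / 2.0)

def cauchy_cutoff(rate, scale):
    """|x| beyond which a fraction `rate` of Cauchy data lies."""
    return scale * math.tan(math.pi / 2.0 * (1.0 - rate))

# Assumption: match the Cauchy curve to the unit normal at the quartiles,
# so the two curves agree near the center and differ only in the tails.
matched_scale = statistics.NormalDist().inv_cdf(0.75)  # about 0.674
print(f"normal cutoff: {normal_cutoff(FLAG_RATE):.2f}")
print(f"Cauchy cutoff: {cauchy_cutoff(FLAG_RATE, matched_scale):.1f}")
```

Under these assumptions the cutoff moves from about 3 to about 159 normal standard deviations, so a point that the three-sigma rule would discard as bad data can be an ordinary member of a thick-tailed sample.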

With each meticulous turn of the screw in science, with each tightening up of our understanding of the natural world, we pull more taut the straps over God's muzzle. From botany to bioengineering, from physics to psychology, what is science really but true Revelation — and what is Revelation but the negation of God? It is a humble pursuit we scientists engage in: racing to reality. Many of us suffer the harsh glare of the American theocracy, whose heart still beats loud and strong in this new year of the 21st century. We bravely favor truth, in all its wondrous, amoral, and 'meaningless' complexity over the singularly destructive Truth born of the trembling minds of our ancestors. But my dangerous idea, I fear, is that no matter how far our thoughts shall vault into the eternal sky of scientific progress, no matter how dazzling the effects of this progress, God will always bite through his muzzle and banish us from the starry night of humanistic ideals.

Science is an endless series of binding and rebinding his breath; there will never be a day when God does not speak for the majority. There will never be a day even when he does not whisper in the most godless of scientists' ears. This is because God is not an idea, nor a cultural invention, nor an 'opiate of the masses' or any such thing; God is a way of thinking that was rendered permanent by natural selection.

As scientists, we must toil and labor and toil again to silence God, but ultimately this is like cutting off our ears to hear more clearly. God too is a biological appendage; until we acknowledge this fact for what it is, until we rear our children with this knowledge, he will continue to howl his discontent for all of time.

The more we discover about cognition and the brain, the more we will realize that education as we know it does not accomplish what we believe it does.

It is not my purpose to echo familiar critiques of our schools. My concerns are of a different nature and apply to the full spectrum of education, including our institutions of higher education, which arguably are the finest in the world.

Our understanding of the intersection between genetics and neuroscience (and their behavioral correlates) is still in its infancy. This century will bring forth an explosion of new knowledge on the genetic and environmental determinants of cognition and brain development, on what and how we learn, on the neural basis of human interaction in social and political contexts, and on variability across people.

Are we prepared to transform our educational institutions if new science challenges cherished notions of what and how we learn? As we acquire the ability to trace genetic and environmental influences on the development of the brain, will we as a society be able to agree on what our educational objectives should be?

Since the advent of scientific psychology we have learned a lot about learning. In the years ahead we will learn a lot more that will continue to challenge our current assumptions. We will learn that some things we currently assume are learnable are not (and vice versa), that some things that are learned successfully don't have the impact on future thinking and behavior that we imagine, and that some of the learning that impacts future thinking and behavior is not what we spend time teaching. We might well discover that the developmental time course for optimal learning from infancy through the life span is not reflected in the standard educational time line around which society is organized. As we discover more about the gulf between how we learn and how we teach, hopefully we will also discover ways to redesign our systems — but I suspect that the latter will lag behind the former.

Our institutions of education certify the mastery of spheres of knowledge valued by society. Several questions will become increasingly pressing, and are even pertinent today. How much of this learning persists beyond the time at which acquisition is certified? How does this learning impact the lives of our students? How central is it in shaping the thinking and behavior we would like to see among educated people as they navigate, negotiate and lead in an increasingly complex world?

We know that tests and admissions processes are selection devices that sort people into cohorts on the basis of excellence on various dimensions. We know less about how much even our finest examples of teaching contribute to human development over and above selection and motivation.

Even current knowledge about cognition (specifically, our understanding of active learning, memory, attention, and implicit learning) has not fully penetrated our educational practices, because of inertia as well as a natural lag in the application of basic research. For example, educators recognize that active learning is superior to the passive transmission of knowledge. Yet we have a long way to go to adapt our educational practices to what we already know about active learning.

We know from research on memory that learning trials bunched up in time produce less long-term retention than the same learning trials spread over time. Yet we compress learning into discrete packets called courses, we test learning at the end of a course of study, and then we move on. Furthermore, memory for both facts and methods of analytic reasoning is context-dependent. We don't know how much of this learning endures, how well it transfers to contexts different from the ones in which the learning occurred, or how it influences future thinking.

At any given time we attend to only a tiny subset of the information in our brains or impinging on our senses. We know from research on attention that information is processed differently by the brain depending upon whether or not it is attended, and that many factors — endogenous and exogenous — control our attention. Educators have been aware of the role of attention in learning, but we are still far from understanding how to incorporate this knowledge into educational design. Moreover, new information presented in a learning situation is interpreted and encoded in terms of prior knowledge and experience; the increasingly diverse backgrounds of students placed in the same learning contexts imply that the same information may vary in its meaningfulness to different students and may be recalled differently.

Most of our learning is implicit, acquired automatically and unconsciously from interactions with the physical and social environment. Yet language — and hence explicit, declarative or consciously articulated knowledge — is the currency of formal education.

Social psychologists know that what we say about why we think and act as we do is but the tip of a largely unconscious iceberg that drives our attitudes and our behavior. Even as cognitive and social neuroscience reveals the structure of these icebergs beneath the surface of consciousness (for example, the persistent cognitive illusions, decision biases and perceptual biases to which even the best educated can fall unwitting victim), it will be less clear how to shape or redirect them.

Research in social cognition shows clearly that racial, cultural and other social biases get encoded automatically by internalizing stereotypes and cultural norms. While we might learn about this research in college, we aren't sure how to counteract these factors in the very minds that have acquired this knowledge.

We are well aware of the power of non-verbal auditory and visual information, which, when amplified by electronic media, captures the attention of our students and sways millions. Future research should give us a better understanding of nuanced non-verbal forms of communication, including their universal and culturally based aspects, as they are manifest in social, political and artistic contexts.

Even the acquisition of declarative knowledge through language — the traditional domain of education — is being usurped by the internet at our fingertips. Our university libraries and publication models are responding to the opportunities and challenges of the information age. But we will need to rethink some of our methods of instruction too. Will our efforts at teaching be drowned out by information from sources more powerful than even the best classroom teacher?

It is only a matter of time before we have brain-related technologies that can alter or supplement cognition, influence what and how we learn, and increase competition for our limited attention. Imagine the challenges for institutions of education in an environment in which these technologies are readily available, for better or worse.

The brain is a complex organ, and we will discover more of this complexity. Our physical, social and information environments are also complex and are becoming more so through globalization and advances in technology. There will be no simple design principles for how we structure education in response to these complexities.

As elite colleges and universities, we see increasing demand for the branding we confer, but we will also see greater scrutiny from society for the education we deliver. Those of us in positions of academic leadership will need wisdom and courage to examine, transform and justify our objectives and methods as educators.

In all times and in all places there has been too much government. We now know what prosperity is: it is the gradual extension of the division of labour through the free exchange of goods and ideas, and the consequent introduction of efficiencies by the invention of new technologies. This is the process that has given us health, wealth and wisdom on a scale unimagined by our ancestors. It not only raises material standards of living, it also fuels social integration, fairness and charity. It has never failed yet. No society has grown poorer or more unequal through trade, exchange and invention. Think of pre-Ming as opposed to Ming China, seventeenth century Holland as opposed to imperial Spain, eighteenth century England as opposed to Louis XIV's France, twentieth century America as opposed to Stalin's Russia, or post-war Japan, Hong Kong and Korea as opposed to Ghana, Cuba and Argentina. Think of the Phoenicians as opposed to the Egyptians, Athens as opposed to Sparta, the Hanseatic League as opposed to the Roman Empire. In every case, weak or decentralised government, but strong free trade led to surges in prosperity for all, whereas strong, central government led to parasitic, tax-fed officialdom, a stifling of innovation, relative economic decline and usually war.

Take Rome. It prospered because it was a free trade zone. But it repeatedly invested the proceeds of that prosperity in too much government and so wasted it in luxury, war, gladiators and public monuments. The Roman Empire's list of innovations is derisory, even compared with that of the 'dark ages' that followed.

In every age and at every time there have been people who say we need more regulation, more government. Sometimes, they say we need it to protect exchange from corruption, to set the standards and police the rules, in which case they have a point, though often they exaggerate it. Self-policing standards and rules were developed by free-trading merchants in medieval Europe long before they were taken over and codified as laws (and often corrupted) by monarchs and governments.

Sometimes, they say we need it to protect the weak, the victims of technological change or trade flows. But throughout history such intervention, though well meant, has usually proved misguided — because its progenitors refuse to believe in (or find out about) David Ricardo's Law of Comparative Advantage: even if China is better at making everything than France, there will still be a million things it pays China to buy from France rather than make itself. Why? Because rather than invent, say, luxury goods or insurance services itself, China will find it pays to make more T-shirts and use the proceeds to import luxury goods and insurance.

Government is a very dangerous toy. It is used to fight wars, impose ideologies and enrich rulers. True, nowadays, our leaders do not enrich themselves (at least not on the scale of the Sun King), but they enrich their clients: they preside over vast and insatiable parasitic bureaucracies that grow by Parkinson's Law and live off true wealth creators such as traders and inventors.

Sure, it is possible to have too little government. Only, that has not been the world's problem for millennia. After the century of Mao, Hitler and Stalin, can anybody really say that the risk of too little government is greater than the risk of too much? The dangerous idea we all need to learn is that the more we limit the growth of government, the better off we will all be.

This turns out to be true in cases where there are collapses in consensus that have serious societal consequences. Whether in relation to climate change, GM crops or the UK's triple vaccine for measles, mumps and rubella, alternative science networks develop amongst people who are neither ignorant nor irrational, but have perceptions about science, the scientific literature and its implications that differ from those prevailing in the scientific community. These perceptions and discussions may be half-baked, but are no less powerful for all that, and carry influence on the internet and in the media. Researchers and governments haven't yet learned how to respond to such "citizen's science". Should they stop explaining and engaging? No. But they need also to understand better the influences at work within such networks — often too dismissively stereotyped — at an early stage in the debate in order to counter bad science and minimize the impacts of falsehoods.

Great art makes itself vulnerable to interpretation, which is one reason that it keeps being stimulating and fascinating for generations. The problem inherent in this is that art could inspire malevolent behavior, as per the notion popularly expressed by A Clockwork Orange. When I was young, aspiring to be a conceptual artist, it disturbed me greatly that I couldn't control the interpretation of my work. When I began painting, it was even worse; even I wasn't completely sure of what my art meant. That seemed dangerous for me, personally, at that time. I gradually came not only to respect the complexity and inscrutability of painting and art, but to see how it empowers the object. I believe that works of art are animated by their creators, and remain able to generate thoughts, feelings, responses. However, the fact is that the exact effect of art can't be controlled or fully anticipated.

What some individuals consider a sacrosanct ability to perceive moral truths may instead be a hodgepodge of simpler psychological mechanisms, some of which have evolved for other purposes.

It is increasingly apparent that our moral sense comprises a fairly loose collection of intuitions, rules of thumb, and emotional responses that may have emerged to serve a variety of functions, some of which originally had nothing at all to do with ethics. These mechanisms, when tossed in with our general ability to reason, seem to be how humans come to answer the question of good and evil, right and wrong. Intuitions about action, intentionality, and control, for instance, figure heavily into our perception of what constitutes an immoral act. The emotional reactions of empathy and disgust likewise figure into our judgments of who deserves moral protection and who doesn't. But the ability to perceive intentions probably didn't evolve as a way to determine who deserves moral blame. And the emotion of disgust most likely evolved to keep us safe from rotten meat and feces, not to provide information about who deserves moral protection.

Discarding the belief that our moral sense provides a royal road to moral truth is an uncomfortable notion. Most people, after all, are moral realists. They believe acts are objectively right or wrong, like math problems. The dangerous idea is that our intuitions may be poor guides to moral truth, and can easily lead us astray in our everyday moral decisions.

I am not concerned here with the radical claim that personal identity, free will, and consciousness do not exist. Regardless of its merit, this position is so intuitively outlandish that nobody but a philosopher could take it seriously, and so it is unlikely to have any real-world implications, dangerous or otherwise.

Instead I am interested in the milder position that mental life has a purely material basis. The dangerous idea, then, is that Cartesian dualism is false. If what you mean by "soul" is something immaterial and immortal, something that exists independently of the brain, then souls do not exist. This is old hat for most psychologists and philosophers, the stuff of introductory lectures. But the rejection of the immaterial soul is unintuitive, unpopular, and, for some people, downright repulsive.

In the journal "First Things", Patrick Lee and Robert P. George outline some worries from a religious perspective.

"If science did show that all human acts, including conceptual thought and free choice, are just brain processes,... it would mean that the difference between human beings and other animals is only superficial, a difference of degree rather than a difference in kind; it would mean that human beings lack any special dignity worthy of special respect. Thus, it would undermine the norms that forbid killing and eating human beings as we kill and eat chickens, or enslaving them and treating them as beasts of burden as we do horses or oxen."

The conclusions don't follow. Even if there are no souls, humans might differ from non-human animals in some other way, perhaps with regard to the capacity for language or abstract reasoning or emotional suffering. And even if there were no difference, it would hardly give us license to do terrible things to human beings. Instead, as Peter Singer and others have argued, it should make us kinder to non-human animals. If a chimpanzee turned out to possess the intelligence and emotions of a human child, for instance, most of us would agree that it would be wrong to eat, kill, or enslave it.

Still, Lee and George are right to worry that giving up on the soul means giving up on an a priori distinction between humans and other creatures, something which has very real consequences. It would also affect how we think about stem-cell research and abortion, euthanasia, cloning, and cosmetic psychopharmacology. It would have substantial implications for the legal realm — a belief in immaterial souls has led otherwise sophisticated commentators to defend a distinction between actions that we do and actions that our brains do. We are responsible only for the former, motivating the excuse that Michael Gazzaniga has called "My brain made me do it." It has been proposed, for instance, that if a pedophile's brain shows a certain pattern of activation while contemplating sex with a child, he should not be viewed as fully responsible for his actions. When you give up on the soul, and accept that all actions correspond to brain activity, this sort of reasoning goes out the window.

The rejection of souls is more dangerous than the idea that kept us so occupied in 2005 — evolution by natural selection. The battle between evolution and creationism is important for many reasons; it is where science takes a stand against superstition. But, like the origin of the universe, the origin of the species is an issue of great intellectual importance and little practical relevance. If everyone were to become a sophisticated Darwinian, our everyday lives would change very little. In contrast, the widespread rejection of the soul would have profound moral and legal consequences. It would also require people to rethink what happens when they die, and give up the idea (held by about 90% of Americans) that their souls will survive the death of their bodies and ascend to heaven. It is hard to get more dangerous than that.

Some countries, including the United States and Australia, have been in denial about global warming. They cast doubt on the science that set alarm bells ringing. Other countries, such as the UK, are in panic, and want to make drastic cuts in greenhouse emissions. Both stances are irrelevant, because the fight is a hopeless one anyway. In spite of the recent hike in the price of oil, the stuff is still cheap enough to burn. Human nature being what it is, people will go on burning it until it starts running out and simple economics puts the brakes on. Meanwhile the carbon dioxide levels in the atmosphere will just go on rising. Even if developed countries rein in their profligate use of fossil fuels, the emerging Asian giants of China and India will more than make up the difference. Rich countries, whose own wealth derives from decades of cheap energy, can hardly preach restraint to developing nations trying to climb the wealth ladder. And without the obvious solution — massive investment in nuclear energy — continued warming looks unstoppable.

Campaigners for cutting greenhouse emissions try to scare us by proclaiming that a warmer world is a worse world. My dangerous idea is that it probably won't be. Some bad things will happen. For example, the sea level will rise, drowning some heavily populated or fertile coastal areas. But in compensation Siberia may become the world's breadbasket. Some deserts may expand, but others may shrink. Some places will get drier, others wetter. The evidence that the world will be worse off overall is flimsy. What is certainly the case is that we will have to adjust, and adjustment is always painful. Populations will have to move. In 200 years some currently densely populated regions may be deserted. But the population movements over the past 200 years have been dramatic too. I doubt if anything more drastic will be necessary. Once it dawns on people that, yes, the world really is warming up and that, no, it doesn't imply Armageddon, then international agreements like the Kyoto Protocol will fall apart.

The idea of giving up the global warming struggle is dangerous because it shouldn't have come to this. Mankind does have the resources and the technology to cut greenhouse gas emissions. What we lack is the political will. People pay lip service to environmental responsibility, but they are rarely prepared to put their money where their mouth is. Global warming may turn out to be not so bad after all, but many other acts of environmental vandalism are manifestly reckless: the depletion of the ozone layer, the destruction of rain forests, the pollution of the oceans. Giving up on global warming will set an ugly precedent.

The idea of promoting dangerous ideas seems dangerous to me. I spend considerable effort to prevent my ideas from becoming dangerous, except, that is, to entrenched false beliefs and to myself. For instance, my idea that bad feelings are useful for our genes upends much conventional wisdom about depression and anxiety. I find, however, that I must firmly restrain journalists who are eager to share the sensational but incorrect conclusion that depression should not be treated. Similarly, many people draw dangerous inferences from my work on Darwinian medicine. For example, just because fever is useful does not mean that it should not be treated. I now emphasize that evolutionary theory does not tell you what to do in the clinic, it just tells you what studies need to be done.

I also feel obligated to prevent my ideas from becoming dangerous on a larger scale. For instance, many people who hear about Darwinian medicine assume incorrectly that it implies support for eugenics. I encourage them to read history as well as my writings. The record shows how quickly natural selection was perverted into Social Darwinism, an ideology that seemed to justify letting poor people starve. Related ideas keep emerging. We scientists have a responsibility to challenge dangerous social policies incorrectly derived from evolutionary theory. Racial superiority is yet another dangerous idea that hurts real people. More examples come to mind all too easily and some quickly get complicated. For instance, the idea that men are inherently different from women has been used to justify discrimination, but the idea that men and women have identical abilities and preferences may also cause great harm.

While I don't want to promote ideas dangerous to others, I am fascinated by ideas that are dangerous to anyone who expresses them. These are "unspeakable ideas." By unspeakable ideas I don't mean those whose expression is forbidden in a certain group. Instead, I propose that there is a class of ideas whose expression is inherently dangerous everywhere and always because of the nature of human social groups. Such unspeakable ideas are anti-memes. Memes, both true and false, spread fast because they are interesting and give social credit to those who spread them. Unspeakable ideas, even true and important ones, don't spread at all, because expressing them is dangerous to those who speak them.

So why, you may ask, is a sensible scientist even bringing the idea up? Isn't the idea of unspeakable ideas a dangerous idea? I expect I will find out. My hope is that a thoughtful exploration of unspeakable ideas should not hurt people in general, perhaps won't hurt me much, and might unearth some long-neglected truths.

Generalizations cannot substitute for examples, even if providing examples is risky. So, please gather your own data. Here is an experiment. The next time you are having a drink with an enthusiastic fan of your hometown team, say "Well, I think our team just isn't very good and didn't deserve to win." Or, moving to more risky territory, when your business group is trying to deal with a savvy competitor, say, "It seems to me that their product is superior because they are smarter than we are." Finally, and I cannot recommend this but it offers dramatic data, you could respond to your spouse's difficulties at work by saying, "If they are complaining about you not doing enough, it is probably because you just aren't doing your fair share." Most people do not need to conduct such social experiments to know what happens when such unspeakable ideas are spoken.

Many broader truths are equally unspeakable. Consider, for instance, all the articles written about leadership. Most are infused with admiration and respect for a leader's greatness. Much rarer are articles about the tendency for leadership positions to be attained by power-hungry men who use their influence to further advance their self-interest. Then there are all the writings about sex and marriage. Most of them suggest that there is some solution that allows full satisfaction for both partners while maintaining secure relationships. Questioning such notions is dangerous, unless you are a comic, in which case skepticism can be very, very funny.

As a final example, consider the unspeakable idea of unbridled self-interest. Someone who says, "I will only do what benefits me," has committed social suicide. Tendencies to say such things have been selected against, while those who advocate goodness, honesty and service to others get wide recognition. This creates an illusion of a moral society that then, thanks to the combined forces of natural and social selection, becomes a reality that makes social life vastly more agreeable.

There are many more examples, but I must stop here. To say more would either get me in trouble or falsify my argument. Will I ever publish my "Unspeakable Essays"? It would be risky, wouldn't it?

Serotonin-enhancing antidepressants (such as Prozac and many others) can jeopardize feelings of romantic love, feelings of attachment to a spouse or partner, one's fertility and one's genetic future.

I am working with psychiatrist Andy Thomson on this topic. We base our hypothesis on patient reports, fMRI studies, and other data on the brain.

Foremost, as SSRIs elevate serotonin they also suppress dopaminergic pathways in the brain. And because romantic love is associated with elevated activity in dopaminergic pathways, it follows that SSRIs can jeopardize feelings of intense romantic love. SSRIs also curb obsessive thinking and blunt the emotions, both central characteristics of romantic love. One patient described this reaction well, writing: "After two bouts of depression in 10 years, my therapist recommended I stay on serotonin-enhancing antidepressants indefinitely. As appreciative as I was to have regained my health, I found that my usual enthusiasm for life was replaced with blandness. My romantic feelings for my wife declined drastically. With the approval of my therapist, I gradually discontinued my medication. My enthusiasm returned and our romance is now as strong as ever. I am prepared to deal with another bout of depression if need be, but in my case the long-term side effects of antidepressants render them off-limits."

SSRIs also suppress sexual desire, sexual arousal and orgasm in as many as 73% of users. These sexual responses evolved to enhance courtship, mating and parenting. Orgasm produces a flood of oxytocin and vasopressin, chemicals associated with feelings of attachment and pair-bonding behaviors. Orgasm is also a device by which women assess potential mates. Women do not reach orgasm with every coupling and the "fickle" female orgasm is now regarded as an adaptive mechanism by which women distinguish males who are willing to expend time and energy to satisfy them. The onset of female anorgasmia may jeopardize the stability of a long-term mateship as well.

Men who take serotonin-enhancing antidepressants also inhibit evolved mechanisms for mate selection, partnership formation and marital stability. The penis stimulates to give pleasure and advertise the male's psychological and physical fitness; it also deposits seminal fluid in the vaginal canal, fluid that contains dopamine, oxytocin, vasopressin, testosterone, estrogen and other chemicals that most likely influence a female partner's behavior.

These medications can also influence one's genetic future. Serotonin increases prolactin by stimulating prolactin releasing factors. Prolactin can impair fertility by suppressing hypothalamic GnRH release, suppressing pituitary FSH and LH release, and/or suppressing ovarian hormone production. Clomipramine, a strong serotonin-enhancing antidepressant, adversely affects sperm volume and motility.

I believe that Homo sapiens has evolved (at least) three primary, distinct yet overlapping neural systems for reproduction. The sex drive evolved to motivate ancestral men and women to seek sexual union with a range of partners; romantic love evolved to enable them to focus their courtship energy on a preferred mate, thereby conserving mating time and energy; attachment evolved to enable them to rear a child through infancy together. The complex and dynamic interactions between these three brain systems suggest that any medication that changes their chemical checks and balances is likely to alter an individual's courting, mating and parenting tactics, ultimately affecting their fertility and genetic future.

The reason this is a dangerous idea is that the huge drug industry is heavily invested in selling these drugs; millions of people currently take these medications worldwide; and as these drugs become generic, many more will soon imbibe — inhibiting their ability to fall in love and stay in love. And if patterns of human love subtly change, all sorts of social and political atrocities can escalate.

Few economists expect the Kyoto Accords to attain their goals. With compliance coming only slowly and with three big holdouts — the US, China and India — the accords seem unlikely to make much difference to overall carbon dioxide increases. Yet all the political pressure is on lessening our fossil fuel burning, in the face of fast-rising demand.

This pits the industrial powers against the legitimate economic aspirations of the developing world — a recipe for conflict.

Those who embrace the reality of global climate change mostly insist that there is only one way out of the greenhouse effect — burn less fossil fuel, or else. Never mind the economic consequences. But the planet itself modulates its atmosphere through several tricks, and we have little considered using most of them. The overall global problem is simple: we capture more heat from the sun than we radiate away. Mostly this is a good thing, else the mean planetary temperature would hover around freezing. But recent human alterations of the atmosphere have resulted in too much of a good thing.

Two methods are getting little attention: sequestering carbon from the air and reflecting sunlight.

Hide the Carbon

There are several schemes to capture carbon dioxide from the air: promote tree growth; trap carbon dioxide from power plants in exhausted gas domes; or let carbon-rich organic waste fall into the deep oceans. Increasing forestation is a good, though rather limited, step. Capturing carbon dioxide from power plants costs about 30% of the plant output, so it's an economic nonstarter.

That leaves the third way. Imagine you are standing in a ripe Kansas cornfield, staring up into a blue summer sky. A transparent acre-area square around you extends upwards in an air-filled tunnel, soaring all the way to space. That long tunnel holds carbon in the form of invisible gas, carbon dioxide — widely implicated in global climate change. But how much?

Very little, compared with how much we worry about it. The corn standing as high as an elephant's eye all around you holds four hundred times as much carbon as there is in man-made carbon dioxide — our villain — in the entire column reaching to the top of the atmosphere. (We have added roughly a hundred parts per million to our air by burning.) Inevitably, we must understand and control the atmosphere, as part of a grand imperative of directing the entire global ecology. Yearly, we manage through agriculture far more carbon than is causing our greenhouse dilemma.

Take advantage of that. The leftover corn cobs and stalks from our fields can be gathered up, floated down the Mississippi, and dropped into the ocean, sequestering their carbon. Below about a kilometer depth, beneath a layer called the thermocline, nothing gets mixed back into the air for a thousand years or more. It's not a forever solution, but it would buy us and our descendants time to find permanent answers. And it is inexpensive; cost matters.

The US has large crop residues. It has also ignored the Kyoto Accord, saying it would cost too much. It would, if we relied purely on traditional methods, policing energy use and carbon dioxide emissions. Clinton-era estimates of such costs were around $100 billion a year — a politically unacceptable sum, which led Congress to reject the very notion by a unanimous vote.

But if the US simply used its farm waste to "hide" carbon dioxide from our air, complying with Kyoto's standard would cost about $10 billion a year, with no change whatsoever in energy use.

The whole planet could do the same. Sequestering crop leftovers could offset about a third of the carbon we put into our air.

The carbon dioxide we add to our air will end up in the oceans, anyway, from natural absorption, but not nearly quickly enough to help us.

Reflect Away Sunlight

Hiding carbon from air is only one example of ways the planet has maintained its perhaps precarious equilibrium over billions of years. Another is our world's ability to edit sunlight, by changing cloud cover.

As the oceans warm, water evaporates, forming clouds. These reflect sunlight, reducing the heat below — but just how much depends on cloud thickness, water droplet size, particulate density — a forest of detail.

If our climate starts to vary too much, we could consider deliberately adjusting cloud cover in selected areas, to offset unwanted heating. It is not actually hard to make clouds; volcanoes and fossil fuel burning do it all the time by adding microscopic particles to the air. Cloud cover is a natural mechanism we can augment, and another area where the possibility of major change in environmental thinking beckons.

A 1997 US Department of Energy study for Los Angeles showed that planting trees and making blacktop and rooftops lighter colored could significantly cool the city in summer. With minimal costs that get repaid within five years, we can reduce summer midday temperatures by several degrees. This would cut air conditioning costs for the residents, simultaneously lowering energy consumption and lessening the urban heat island effect. Incoming rain clouds would not rise as much above the heat blossom of the city, and so would rain on it less. Instead, clouds would continue inland to drop rain on the rest of Southern California, promoting plant growth. These methods are now under way in Los Angeles, a first experiment.

We can combine this with a cloud-forming strategy. Producing clouds over the tropical oceans is the most effective way to cool the planet on a global scale, since the dark oceans absorb the bulk of the sun's heat. This we should explore now, in case sudden climate changes force us to act quickly.

Yet some environmentalists find all such steps suspect. They smack of engineering, rather than self-discipline. True enough — and that's what makes such thinking dangerous, for some.

Yet if Kyoto fails to gather momentum, as seems probable to many, what else can we do? Turn ourselves into ineffectual Mommy-cop states, with endless finger-pointing politics, trying to regulate equally both the rich in their SUVs and Chinese peasants who burn coal for warmth? Our present conventional wisdom might be termed The Puritan Solution (Abstain, sinners!), and it is making slow, small progress. The Kyoto Accord calls for the industrial nations to cut their carbon dioxide emissions below 1990 levels (7% below, in the case of the US), and globally we are farther from this goal every year.

These steps are early measures to help us assume our eventual 21st-century role as true stewards of the Earth, working alongside Nature. Recently Billy Graham declared that since the Bible made us stewards of the Earth, we have a holy duty to avert climate change. True stewards use the Garden's own methods.

In his December 10, 1950, Nobel Prize acceptance speech, William Faulkner said:

I decline to accept the end of man. It is easy enough to say that man is immortal simply because he will endure: that when the last ding-dong of doom has clanged and faded from the last worthless rock hanging tideless in the last red and dying evening, that even then there will still be one more sound: that of his puny inexhaustible voice, still talking. I refuse to accept this. I believe that man will not merely endure: he will prevail.

He is immortal, not because he alone among creatures has an inexhaustible voice, but because he has a soul, a spirit capable of compassion and sacrifice and endurance. The poet's, the writer's, duty is to write about these things. It is his privilege to help man endure by lifting his heart, by reminding him of the courage and honor and hope and pride and compassion and pity and sacrifice which have been the glory of his past. The poet's voice need not merely be the record of man, it can be one of the props, the pillars to help him endure and prevail.

It's easy to dismiss such optimism. The reason I hope Faulkner was right, however, is that we are at a turning point in history. For the first time, our technologies are not so much aimed outward at modifying our environment in the fashion of fire, clothes, agriculture, cities and space travel. Instead, they are increasingly aimed inward at modifying our minds, memories, metabolisms, personalities and progeny. If we can do all that, then we are entering an era of engineered evolution — radical evolution, if you will — in which we take control of what it will mean to be human.

This is not some distant, science-fiction future. This is happening right now, in our generation, on our watch. The GRIN technologies — the genetic, robotic, information and nano processes — are following curves of accelerating technological change the arithmetic of which suggests that the last 20 years are not a guide to the next 20 years. We are more likely to see that magnitude of change in the next eight. Similarly, the amount of change of the last half century, going back to the time when Faulkner spoke, may well be compressed into the next 14.

This raises the question of where we will gain the wisdom to guide this torrent, and points to what happens if Faulkner was wrong. If we humans prove unable to control our tools and instead come to be controlled by them, then we will be heading into a technodeterminist future.

You can get different versions of what that might mean.

Some would have you believe that a future in which our creations eliminate the ills that have plagued mankind for millennia — conquering pain, suffering, stupidity, ignorance and even death — is a vision of heaven. Some even welcome the idea that someday soon, our creations will surpass the pitiful limitations of Version 1.0 humans, themselves becoming a successor race that will conquer the universe, and care for us benevolently.

Others feel strongly that a life without suffering is a life without meaning, reducing humankind to ignominious, character-less husks. They also point to what could happen if such powerful self-replicating technologies get into the hands of bumblers or madmen. They can easily imagine a vision of hell in which we wipe out not only our species, but all of life on earth.

If Faulkner is right, however, there is a third possible future. That is the one that counts on the ragged human convoy of divergent perceptions, piqued honor, posturing, insecurity and humor once again wending its way to glory. It puts a shocking premium on Faulkner's hope that man will prevail "because he has a soul, a spirit capable of compassion and sacrifice and endurance." It assumes that even as change picks up speed, giving us less and less time to react, we will still be able to rely on the impulse that Churchill described when he said, "Americans can always be counted on to do the right thing—after they have exhausted all other possibilities."

The key measure of such a "prevail" scenario's success would be an increasing intensity of links between humans, not transistors. If some sort of transcendence is achieved beyond today's understanding of human nature, it would not be through some individual becoming superman. Transcendence would be social, not solitary. The measure would be the extent to which many transform together.

The very fact that Faulkner's proposition looms so large as we look into the future does at least illuminate the present.

Referring to Faulkner's breathtaking line, "when the last ding-dong of doom has clanged and faded from the last worthless rock hanging tideless in the last red and dying evening, that even then there will still be one more sound: that of his puny inexhaustible voice, still talking," the author Bruce Sterling once told me, "You know, the most interesting part about that speech is that part right there, where William Faulkner, of all people, is alluding to H. G. Wells and the last journey of the Traveler from The Time Machine. It's kind of a completely heartfelt, probably drunk mishmash of cornball crypto-religious literary humanism and the stark, bonkers, apocalyptic notions of atomic Armageddon, human extinction, and deep Darwinian geological time. Man, that was the 20th century all over."

Media violence induces imitative violence. If true, this idea is dangerous for at least two main reasons. First, its implications are highly relevant to the issue of freedom of speech. Second, it suggests that our rational autonomy is much more limited than we like to think. This idea is especially dangerous now, because we have discovered a plausible neural mechanism that can explain why observing violence induces imitative violence. Moreover, the properties of this neural mechanism, the human mirror neuron system, suggest that imitative violence may not always be a consciously mediated process. The argument for protecting even harmful speech (understood in a broad sense, including movies and videogames) has typically been that the effects of speech are always mediated by the mind of the listener or viewer. If a plausible neurobiological mechanism suggests that this intermediate step can be bypassed, that argument is no longer valid.

For more than 50 years, behavioral data have suggested that media violence induces violent behavior in the observers. Meta-analytic data show that the effect size of media violence is much larger than the effect size of calcium intake on bone mass, or of asbestos exposure on cancer. Still, the behavioral data have been criticized. How is that possible? Two main types of data have been invoked: controlled laboratory experiments, and correlational studies assessing the types of media consumed and violent behavior. The lab data have been criticized for lacking ecological validity, whereas the correlational data have been criticized for having no explanatory power. Here, as a neuroscientist who studies the human mirror neuron system and its relation to imitation, I want to focus on a recent neuroscience discovery that may explain why humans' strong imitative tendencies can lead them to imitative violence when they are exposed to media violence.

Mirror neurons are cells located in the premotor cortex, the part of the brain relevant to the planning, selection and execution of actions. In the ventral sector of the premotor cortex there are cells that fire in relation to specific goal-related motor acts, such as grasping, holding, tearing, and bringing to the mouth. Surprisingly, a subset of these cells (what we call mirror neurons) also fire when we observe somebody else performing the same action. The behavior of these cells suggests that, while watching somebody else's actions, the observer is looking at her/his own actions reflected in a mirror. My group has also shown in several studies that human mirror neuron areas are critical to imitation. There is also evidence that the activation of this neural system is fairly automatic, suggesting that it may bypass conscious mediation. Moreover, mirror neurons also code the intention associated with observed actions, even though there is not a one-to-one mapping between actions and intentions (I can grasp a cup because I want to drink or because I want to put it in the dishwasher). This suggests that this system can indeed code sequences of action (i.e., what happens after I grasp the cup), even though only one action in the sequence has been observed.

Some years ago, when we were still a very small group of neuroscientists studying mirror neurons and were just starting to investigate the role of mirror neurons in intention understanding, we discussed the possibility of super mirror neurons. After all, if you have such a powerful neural system in your brain, you also want to have some control or modulatory neural mechanisms. We now have preliminary evidence suggesting that some prefrontal areas have super mirrors. I think super mirrors come in at least two flavors. One is inhibition of overt mirroring; the other, which might explain why we imitate violent behavior (something that requires a fairly complex sequence of motor acts), is mirroring of sequences of motor actions. Super mirror mechanisms may provide a fairly detailed explanation of imitative violence after exposure to media violence.

From Copernicus to Darwin to Freud, science has a special way of deflating human hubris by proposing ideas that are frequently perceived, at the time, as dangerous or pernicious. Today, cognitive neuroscience presents us with a challenging new idea whose accommodation will require substantial personal and societal effort: the discovery of the intrinsic limits of the human brain.

Calculation was one of the first domains where we lost our special status — right from their inception, computers were faster than the human brain, and they are now billions of times ahead of us in their speed and breadth of number crunching. Psychological research shows that our mental "central executive" is amazingly limited — we can process only one thought at a time, at a meager rate of five or ten per second at most. This is rather surprising. Isn't the human brain supposed to be the most massively parallel machine on earth? Yes, but its architecture is such that the collective outcome of this parallel organization, our mind, is a very slow serial processor. What we can become aware of is intrinsically limited. Whenever we delve deeply into the processing of one object, we become literally blind to other items that would require our attention (the "attentional blink" paradigm). We also suffer from an "illusion of seeing": we think that we take in a whole visual scene and see it all at once, but research shows that major chunks of the image can be changed surreptitiously without our noticing.

True, relative to other animal species, we do have a special combinatorial power, which lies at the heart of the remarkable cultural inventions of mathematics, language, and writing. Yet this combinatorial faculty only works on the raw materials provided by a small number of core systems for number, space, time, emotion, conspecifics, and a few other basic domains. The list is not very long, and within each domain we are now discovering lots of little ill-adapted quirks, evidence of stupid design as expected from a brain arising from an imperfect evolutionary process (for instance, our number system only gives us a sense of approximate quantity: good enough for foraging, but not for exact mathematics). I therefore do not share Marc Hauser's optimism that our mind has a "universal" or "limitless" expressive power. The limits are easy to reach in mathematics, in topology for instance, where we struggle with the simplest objects (is a curve a knot… or not?).

As we discover the limits of the human brain, we also find new ways to design machines that go beyond those limits. Thus, we have to get ready for a society where, more and more, the human mind will be replaced by better computers and robots, and where the human operator will be increasingly considered a nuisance rather than an asset. This is already the case in aeronautics, where flight stability is ensured by fast cybernetics and where landing and takeoff will soon be handled by computer, apparently with much improved safety.

There are still a few domains where the human brain maintains an apparent superiority. Visual recognition used to be one, but superb face recognition software is already appearing, capable of storing and recognizing thousands of faces with close to human performance. Robotics is another: no robot to date is capable of navigating smoothly through a complicated 3-D world. Yet a third area of human superiority is high-level semantics and creativity: the human ability to make sense of a story, to pull out the relevant knowledge from a vast store of potentially useful facts, remains unequalled.