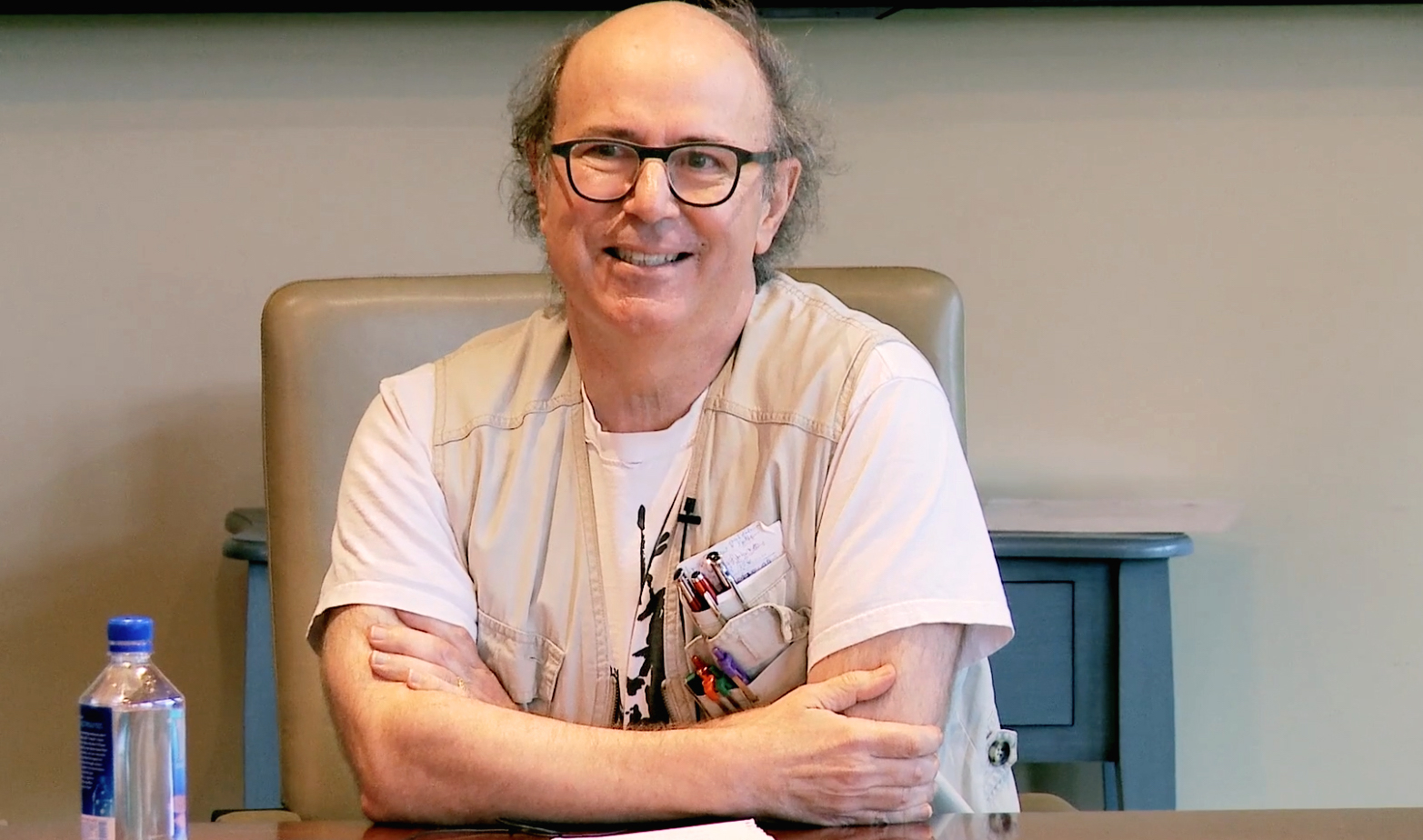

Mary Catherine Bateson

1939–2021

Introduction

by John Brockman

From the early days of Edge, Catherine Bateson was the gift that kept giving. Beginning in 1998 with her response to “What Questions Are You Asking Yourself?” and continuing through “The Last Question” in 2018, she exemplified the role of the Third Culture intellectual: “those scientists and other thinkers in the empirical world who, through their work and expository writing, are taking the place of the traditional intellectual in rendering visible the deeper meanings of our lives, redefining who and what we are.”

Her Edge essays over the years ranged across subjects as varied as "ecology and culture," systems thinking, cybernetics, metaphor, gender, climate, schismogenesis (i.e., positive feedback), and the nature of side effects. Together they are evidence of a keen and fearless intellect determined to advance science-based thinking as well as her own controversial ideas.

She once told me, "It turns out that the Greek religious system is a way of translating what you know about your sisters, and your cousins, and your aunts into knowledge about what’s happening to the weather, the climate, the crops, and international relations, all sorts of things. A metaphor is always a framework for thinking, using knowledge of this to think about that. Religion is an adaptive tool, among other things. It is a form of analogic thinking."

"We carry an analog machine around with us all the time called our body," she said. "It’s got all these different organs that interact; they’re interdependent. If one of them goes out of kilter, the others go out of kilter, eventually. This is true in society. This is how disease spreads through a community, because everything is connected."

She used methods from systems theory to explore "how people think about complex wholes like the ecology of the planet, or the climate, or large populations of human beings that have evolved for many years in separate locations and are now re-integrating."

"Until fairly recently," she noted, "artificial intelligence didn’t learn. To create a machine that learns to think more efficiently was a big challenge. One of the things that I wonder about is how we'll be able to teach a machine to know what it doesn’t know that it might need to know in order to address a particular issue productively and insightfully. This is a huge problem for human beings. It takes a while for us to learn to solve problems, and then it takes even longer for us to realize what we don’t know that we would need to know to solve a particular problem."

Mary Catherine Bateson was present at ground zero of the cybernetic revolution: the home of her parents, Margaret Mead and Gregory Bateson. As she recalled:

As a child, I had the early conversations of the cybernetic revolution going on around me. I can look at examples and realize that when one of my parents was trying to teach me something, it was directly connected with what they were doing and thinking about in the context of cybernetics.

One of my favorite memories of my childhood was my father helping me set up an aquarium. In retrospect, I understand that he was teaching me to think about a community of organisms and their interactions, interdependence, and the issue of keeping them in balance so that it would be a healthy community. That was just at the beginning of our looking at the natural world in terms of ecology and balance. Rather than itemizing what was there, I was learning to look at the relationships and not just separate things.

Bless his heart, he didn’t tell me he was teaching me about cybernetics. I think I would have walked out on him. Another way to say it is that he was teaching me to think about systems. Gregory coined the term "schismogenesis" in 1936, from observing the culture of a New Guinea tribe, the Iatmul, in which there was a lot of what he called schismogenesis. Schismogenesis is now called "positive feedback"; it’s what happens in an arms race. You have a point of friction, where you feel threatened by, say, another nation. So, you get a few more tanks. They look at that and say, "They’re arming against us," and they get a lot more tanks. Then you get more tanks. And they get more tanks or airplanes or bombs, or whatever it is. That’s positive feedback.

At the beginning of the war, my parents, Margaret Mead and Gregory Bateson, had very recently met and married. They met Lawrence K. Frank, who was an executive of the Macy Foundation. As a result of that, both of them were involved in the Macy Conferences on Cybernetics, which continued then for twenty years. They still quote my mother constantly in talking about second-order cybernetics: the cybernetics of cybernetics. They refer to Gregory as well, though he was more interested in cybernetics as abstract analytical techniques. My mother was more interested in how we could apply this to human relations.

My parents looked at the cybernetics conferences rather differently. My mother, who initially posed the concept of the cybernetics of cybernetics, second-order cybernetics, came out of the anthropological approach to participant observation: How can you do something and observe yourself doing it? She was saying, "Okay, you’re inventing a science of cybernetics, but are you looking at your process of inventing it, your process of publishing, and explaining, and interpreting?" One of the problems in the United States has been that pieces of cybernetics have exploded into tremendous economic activity in all of computer science, but much of the systems theory side of cybernetics has been sort of a stepchild. I firmly believe that it is the systems thinking that is critical.

At the point where she said, "You guys need to look at what you’re doing. What is the cybernetics of cybernetics?" what she was saying was, "Stop and look at your own process and understand it." Eventually, I suppose you do run into the infinite recursion problem, but I guess you get used to that.

How do you know that you know what you know? When I think about the excitement of those early years of the cybernetic conferences, there have been several losses. One is that the explosion of devices and manufacturing and the huge economic effect of computer technology has overshadowed the epistemological curiosity on which it was built, of how we know what we know, and how that affects decision making.

She expressed concern that one of her favorite topics, "ecology and culture," was "most commonly taught in terms of the way a given environment determines the possible cultural patterns that human societies develop to adapt to that environment, sometimes also in terms of the impact of a given human community. What I was mainly focusing on, however, came out of work that I'd done with my father, namely the relationship between the ideas, the beliefs, the understandings, and so on, of a group of people, and the way they impact their environment."

Bateson was a prolific author who wrote seven books, co-authored two, and recently completed a new book, *Love Across Difference*, scheduled for publication in 2022. Her writing is notable for the way she purged abstractions from her books to make way for stories, almost always about women who were often unknown to the public. This allowed her stories to carry the kernel of the ideas. "And in the process," she said, "the ideas become more nuanced, less cut and dried."

"Famous people are interesting," she said, "but there's a kind of a distancing phenomenon there. I'm interested in the creativity that we all put into our lives. Picasso's life story is not empowering to the creativity of ordinary people. What is empowering is looking at someone that they can identify with. And becoming aware of what they're already doing."

Given her interest in storytelling, I once asked her, "What's the purpose of asking people to make that jump, instead of directly addressing the more general questions?"

"People learn from stories in a different way than the way they learn from generalities," she replied. "When I'm writing, I often start out with abstractions and academic jargon and purge it. The red pencil goes through page after page while I try to make sure that the stories and examples remain to carry the kernel of the ideas, and in the process the ideas become more nuanced, less cut and dried. Sometimes reviewers seem to want the abstractions back, but I figure that if they were able to recognize what's being said, it didn't have to be spelled out or dressed up in pretentious technical language."

"I am concerned about the state of the world," she told me the last time we sat down together in 2018.

One of the things that we’re all seeing is that a lot of work that has been done to enable international cooperation in dealing with various problems since World War II is being pulled apart. We’re seeing the progress we thought had been made in this country in race relations being reversed. We’re seeing the partial breakup—we don’t know how far that will go—of a united Europe. We’re moving ourselves back several centuries in terms of thinking about what it is to be human, what it is to share the same planet, how we’re going to interact and communicate with each other. We’re going to be starting from scratch pretty soon.

"One of the most essential elements of human wisdom at its best is humility, knowing that you don’t know everything," she said. "There’s a sense in which we haven’t learned how to build humility into our interactions with our devices. The computer doesn’t know what it doesn’t know, and it's willing to make projections when it hasn’t been provided with everything that would be relevant to those projections. How do we get there? I don’t know. It’s important to be aware of it, to realize that there are limits to what we can do with AI. It’s great for computation and arithmetic, and it saves huge amounts of labor. It seems to me that it lacks humility, lacks imagination, and lacks humor. It doesn’t mean you can’t bring those things into your interactions with your devices, particularly in communicating with other human beings. But it does mean that elements of intelligence and wisdom (I like the word wisdom, because it's more multi-dimensional) are going to be lacking."

In this special edition of Edge, we honor the memory of Mary Catherine Bateson by taking a deep dive into her ideas through her conversations, her essays, and her responses over a twenty-year period to the Edge Annual Question.

—JB

Editor, Edge