
THE $100,000 EDGE OF COMPUTATION SCIENCE PRIZE


For individual scientific work, extending the computational idea, performed, published, or newly applied within the past ten years.

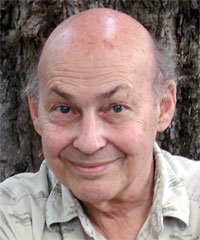

David Deutsch

Recipient of the 2005 $100,000 Edge of Computation Science Prize

DAVID DEUTSCH is the founder of the field of quantum computation. Paul Benioff, Richard Feynman, and others had written about the possibility of quantum computation earlier, but Deutsch's 1985 paper on Quantum Turing Machines was the first full treatment of the subject, and the Deutsch-Jozsa algorithm is the first quantum algorithm.

When he first proposed it, quantum computation seemed practically impossible. But the last decade has seen an explosion in the construction of simple quantum computers and quantum communication systems. None of this would have taken place without Deutsch's work.

The nominating essay is reproduced in part below.

Although the general idea of a quantum computer had been proposed earlier by Richard Feynman, in 1985 David Deutsch wrote the key paper which proposed the idea of a quantum computer and initiated the study of how to make one. Since then he has continued to be a pioneer and a leader in a rapidly growing field that is now called quantum information science.

Presently, small quantum computers are operating in laboratories around the world, and the race is on to find a scalable implementation that, if successful, will revolutionize the technologies of computation and communications. It is fair to say that no one deserves recognition for the growing success of this field more than Deutsch, for his ongoing work as well as for his founding paper. Among his key contributions in the last ten years are a paper with Ekert and Jozsa on quantum logic gates, and a proof of universality in quantum computation, with Barenco and Ekert (both in 1995).

One reason to nominate Deutsch for this prize is that he has always aimed to expand our understanding of the notion of computation in the context of the deepest questions in the foundations of mathematics and physics. Thus, his pioneering work in 1985 was motivated by interest in the Church-Turing thesis. Much of his recent work is motivated by his interest in the foundations of quantum mechanics, as we see from his 1997 book.

ABOUT DAVID DEUTSCH

The main papers written by Deutsch that contained "achievement in scientific work that embodies extensions of the computational idea" were in 1985 ("Quantum theory, the Church-Turing principle, and the universal quantum computer") and 1989 ("Quantum computational networks").

His 1995 paper, "Conditional quantum dynamics and logic gates" (with A. Barenco, A. Ekert and R. Jozsa) was an important step in clarifying what sort of physical processes would be needed to implement quantum computation in the laboratory, and what sort of things the experimentalists should be trying to get to work.

"Universality in quantum computation," also written in 1995 (with A. Barenco and A. Ekert) proved the universality of almost all 2-qubit quantum gates, thus verifying his conjecture made in 1989 and showing that quantum computation and quantum gate operations are "built in" to quantum physics far more deeply than classical physics. In 1996, in "Quantum privacy amplification and the security of quantum cryptography over noisy channels" (with A. Ekert, R. Jozsa, C. Macchiavello, S. Popescu and A. Sanpera), he brought quantum cryptography a little bit closer to being practical as opposed to just a laboratory curiosity.

His recent work, represented by the following three papers, can be seen as new "applications" of the computational idea rather than extensions of it.

In 2000, "Information Flow in Entangled Quantum Systems" (with P. Hayden) refutes the long-held belief that quantum systems contain 'non-local' effects. It does so by appealing to the universality of quantum computational networks and analysing information flow in them.

Also in 2000, in "Machines, Logic and Quantum Physics" (with A. Ekert and R. Lupacchini), a philosophical paper rather than a scientific one, he appealed to the existence of a distinctive quantum theory of computation to argue that our knowledge of mathematics is derived from, and is subordinate to, our knowledge of physics (even though mathematical truth is independent of physics).

In 2002, in "The Structure of the Multiverse," he answered several long-standing questions about the multiverse interpretation of quantum theory, in particular what sort of structure a "universe" is within the multiverse. The paper does this by using the methods of the quantum theory of computation to analyse information flow in the multiverse.

His two main lines of current research, qubit field theory and quantum constructor theory, may well yield important extensions of the computational idea eventually, but so far neither has yielded any results to speak of, only promising avenues of research.

Born in Haifa, Israel, David Deutsch was educated at Cambridge and Oxford universities. After several years at the University of Texas at Austin, he returned to Oxford, where he now lives and works. Since 1999, he has been a non-stipendiary Visiting Professor of Physics at the University of Oxford, where he is a member of the Centre for Quantum Computation at the Clarendon Laboratory.

In 1998 he was awarded the Institute of Physics' Paul Dirac Prize and Medal. This is the Premier Award for theoretical physics within the gift of the Council of the Institute of Physics. It is made for "outstanding contributions to theoretical (including mathematical and computational) physics." In 2002 he received the Fourth International Award on Quantum Communication for "theoretical work on Quantum Computer Science."

He is the author of The Fabric of Reality [1997].

References:

"Quantum Theory, The Church-Turing Principle, and the Universal Quantum Computer" Proc. R. Soc. Lond. A400 97-117 (1985)

"Quantum computational networks" Proc. R. Soc. Lond. A425 73-90 (1989)

"Conditional quantum dynamics and logic gates" (with A. Barenco, A. Ekert and R. Jozsa) Phys. Rev. Lett. 74 4083-6 (1995)

"Universality in quantum computation" (with A. Barenco and A. Ekert) Proc. R. Soc. Lond. A449 669-77 (1995)

"Quantum privacy amplification and the security of quantum cryptography over noisy channels" (with A. Ekert, R. Jozsa, C. Macchiavello, S. Popescu and A. Sanpera) Phys. Rev. Lett. 77 2818-21 (1996)

"Information Flow in Entangled Quantum Systems" (with P. Hayden) Proc. R. Soc. Lond. A456 1759-1774 (2000)

"Machines, Logic and Quantum Physics" (with A. Ekert and R. Lupacchini) Bulletin of Symbolic Logic 3 3 (2000)

"The Structure of the Multiverse" Proc. R. Soc. Lond. A458 2028 2911-23 (2002)

David Deutsch's Edge bio page