EDGE: LIVE, IN LONDON

This year's collaboration with the Serpentine Gallery in London was part of the "Extinction Marathon: Visions of the Future" event, which took place on Oct. 18th in the Serpentine Sackler Gallery's extension, designed by Zaha Hadid. The entire event, which was live-streamed, will be presented on Edge.

An EDGE Conversation: "DE-EXTINCTION": Stewart Brand & Richard Prum

with Hans Ulrich Obrist & John Brockman

Does the prospect of "de-extinction" change how we think about extinction? Conservation science is shifting from being species-centric to function-centric, focussing on the overall health of ecosystems. Does the extinction of a species leave a "gap in nature" that can only be filled by returning the species to life and to the wild? Or will a functionally close relative serve? Is a de-extincted species really nothing more than a functionally close relative anyway? If it is too difficult and expensive to revive every extinct species, what are the criteria for deciding which ones to work on? Humans are the ones deciding. What ethics and aesthetics should guide those decisions?

STEWART BRAND is the Founder of The Whole Earth Catalog and Co-founder of The Long Now Foundation and Revive and Restore; Author, Whole Earth Discipline.

Stewart Brand's Edge Bio Page

RICHARD PRUM is an Evolutionary Ornithologist at Yale University, where he is the Curator of Ornithology and Head Curator of Vertebrate Zoology in the Yale Peabody Museum of Natural History. He is working on a book about duck sex, aesthetic evolution, and the origin of beauty.

Richard Prum's Edge Bio Page

"EDGIES ON EXTINCTION": 10-minute talks by Helena Cronin, Jennifer Jacquet, Steve Jones, and Chiara Marletto, and an EDGE discussion joined by Molly Crockett, Hans Ulrich Obrist, and John Brockman.

I dream about the sea cow and imagine what it would be like to see one in the wild, but the case of the Pinta Island giant tortoise felt particularly strange to me personally, because when I was a volunteer with the Sea Shepherd Conservation Society in the Galapagos Islands, I spent many afternoons with Lonesome George in his den. If any of you have visited the Galapagos, you know that you can even feed the giant tortoises at the Charles Darwin Research Station. This is Lonesome George here.

He lived to a ripe old age but failed, as they pointed out many times, to reproduce. Just recently, in 2012, he died, and with him the last of his species. He was couriered to the American Museum of Natural History and taxidermied there. A couple of weeks ago his body was unveiled. This is the unveiling that I attended, and at that exact moment I was feeling a little like I am now: nervous and kind of nauseous, while everyone else seemed calm. I wasn't prepared to see Lonesome George. Here he is taxidermied, looking out over Central Park, which was strange as well. At that moment I realized that I had known the last individual of this species before it went extinct. That presents a strange predicament for us in the 21st century: this idea of conspicuous extinction. [Continue...]

JENNIFER JACQUET is an Assistant Professor of Environmental Studies at NYU researching cooperation and the tragedy of the commons; Author, Is Shame Necessary? Jennifer Jacquet's Edge Bio Page

~ ~ ~ ~

There is a new fundamental theory of physics called constructor theory, which was proposed by David Deutsch, who pioneered the theory of the universal quantum computer. David and I are working on this theory together. The fundamental idea in this theory is that we formulate all laws of physics in terms of what tasks are possible, what are impossible, and why. In this theory we have an exact physical characterization of an object that has those properties, and we call that knowledge. Note that knowledge here means knowledge without a knowing subject, as in the theory of knowledge of the philosopher Karl Popper.

We’ve just come to the conclusion that the fact that extinction is possible means that knowledge can be instantiated in our physical world. In fact, extinction is the very process by which a piece of knowledge loses its ability to remain instantiated in physical systems, because there are problems that it cannot solve. With any luck, that bit of knowledge can be replaced with a better one. [Continue...]

CHIARA MARLETTO is a Junior Research Fellow at Wolfson College and Postdoctoral Research Assistant at the Materials Department at the University of Oxford. Chiara Marletto's Edge Bio Page

~ ~ ~ ~

What I wanted to talk about is somewhat of a parallel to that in human populations. If you were to go to a textbook on human biology from the time of Darwin or a bit later, you would certainly get an image that looked a bit like this. This is an image of the so-called races of humankind (racial types, as they called them). I’m not going to go into the question of whether there are real races of humankind, because there aren’t. It’s interesting to note that until quite recently people assumed, and scientists assumed too, that the human species was divided into distinct groups that were biologically different from each other and had been isolated from each other for a long, long time.

Well, to some extent that was true. Until quite recently, human populations were isolated from each other. That’s changing quite quickly. [Continue...]

STEVE JONES is a Professor of Genetics at the Galton Laboratory of University College London; Author, The Language of the Genes. Steve Jones's Edge Bio Page

~ ~ ~ ~

... A strange thing happened on the way to a better world, in pursuit of an admirable quest: a world free of sex discrimination, where you’re judged on your own qualities and not your sex. Truth and falsity went topsy-turvy. The truth, the science of sex differences, became dangerous and unmentionable, and in its place the conventional wisdom, a ragbag of ideas that have long been extinct but are kept ghoulishly alive by popularity, became the entrenched orthodoxy, influencing public thinking, agendas and policy-making, and completely crowding out science and sense.

My aim is to show you why the current orthodoxy should be abandoned and why, if you really care about a fairer world, the science does matter. It matters profoundly. I’m going to take two examples, both about the professions, because they epitomize the orthodox litany so well: how society systematically discriminates against women, and how at work women are victims of pervasive sexism. [Continue...]

HELENA CRONIN is the Co-Director of LSE's Centre for Philosophy of Natural and Social Science; Author, The Ant and the Peacock: Altruism and Sexual Selection from Darwin to Today. Helena Cronin's Edge Bio Page

~ ~ ~ ~

MOLLY CROCKETT is an Associate Professor in the Department of Experimental Psychology at the University of Oxford; Wellcome Trust Postdoctoral Fellow at the Wellcome Trust Centre for Neuroimaging. Molly Crockett's Edge Bio Page

HANS ULRICH OBRIST is the Co-director of the Serpentine Gallery in London; Author, Ways of Curating. Hans Ulrich Obrist's Edge Bio Page

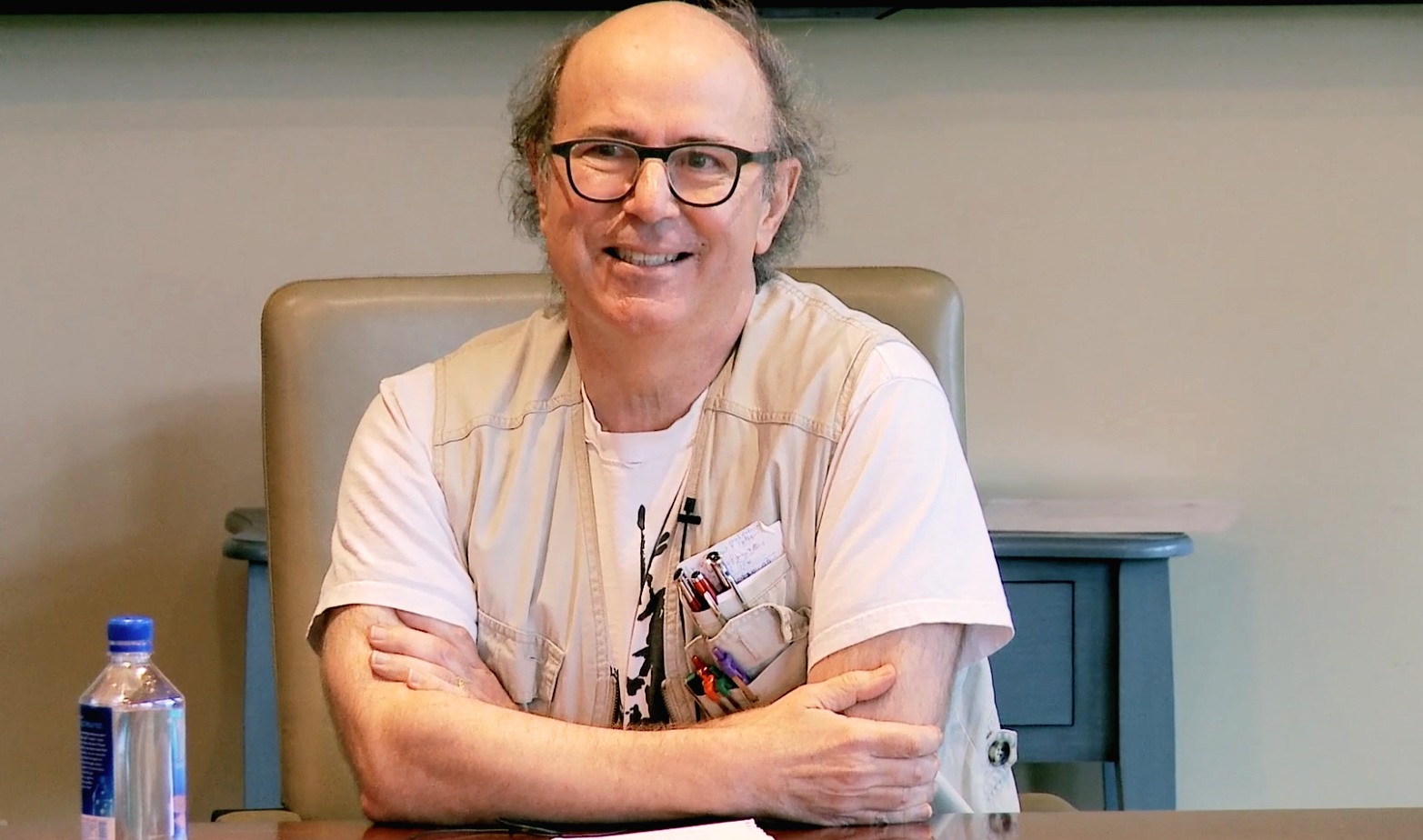

JOHN BROCKMAN is the Editor and Publisher of Edge.org; Chairman of Brockman, Inc.; Author, By the Late John Brockman, The Third Culture. John Brockman's Edge Bio Page

EDGE & SERPENTINE GALLERY

Previous Edge-Serpentine collaborations have included:

"Formulae for the 21st Century" (2007)

"The Table-Top Experiment Marathon" (2007)

"Maps For The 21st Century" (2010)

"Information Gardens" (2011)

SPEAKING OF EXTINCTIONS...

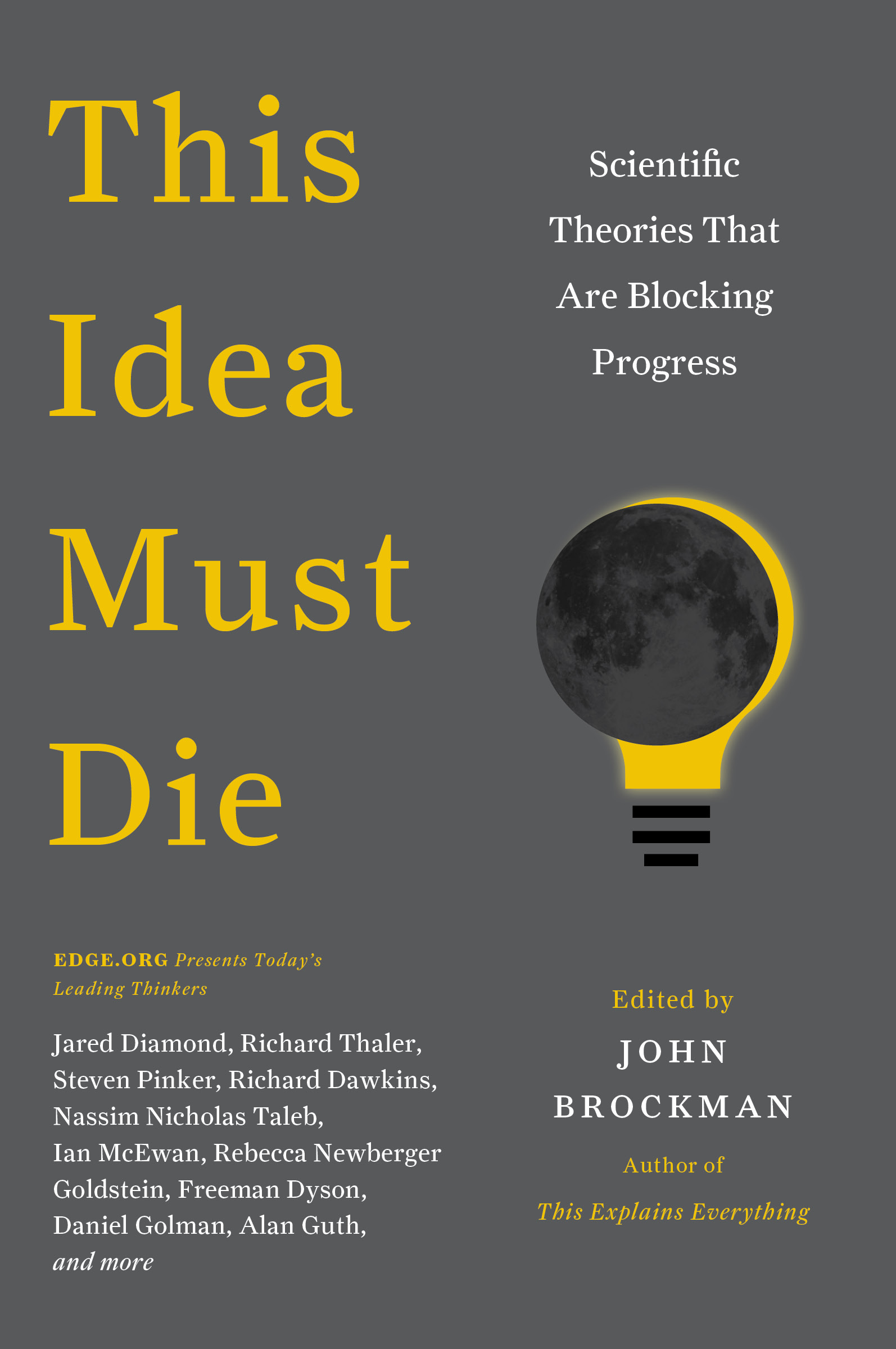

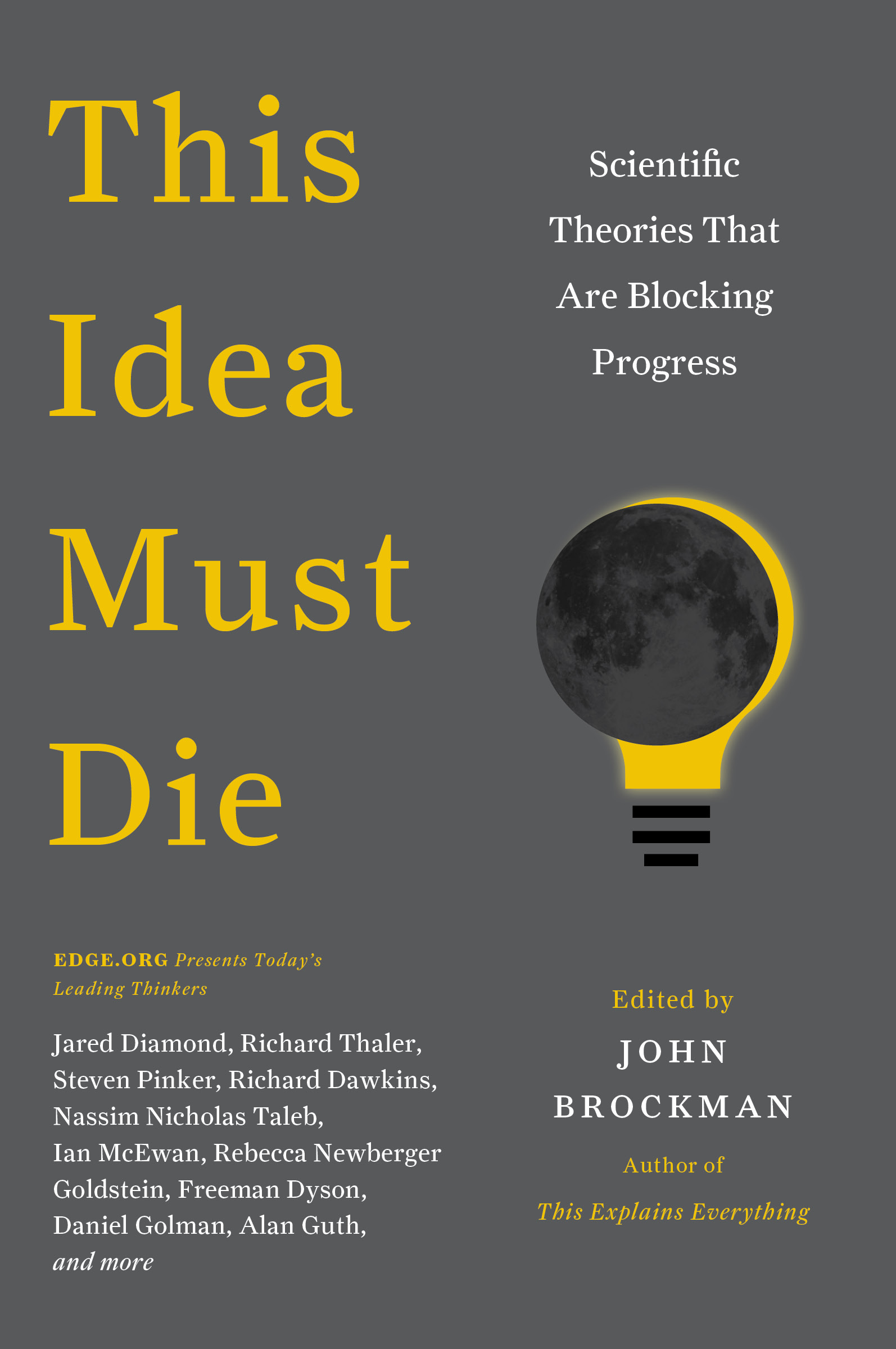

Edge's own contribution to the conversation will be published in February: