THE HILLIS KNOWLEDGE WEB [1]

In May 2004, Edge published W. Daniel "Danny" Hillis's essay "'Aristotle': The Knowledge Web", in which he noted:

...humanity's accumulated store of information will become more accessible, more manageable, and more useful. Anyone who wants to learn will be able to find the best and the most meaningful explanations of what they want to know. Anyone with something to teach will have a way to reach those who want to learn. Teachers will move beyond their present role as dispensers of information and become guides, mentors, facilitators, and authors. The knowledge web will make us all smarter. The knowledge web is an idea whose time has come.

In his essay, Hillis asked the Edge community to begin a conversation, and a number of people who think deeply about such matters participated: Douglas Rushkoff, Marc D. Hauser, Stewart Brand, Jim O'Donnell, Jaron Lanier, Bruce Sterling, Roger Schank, George Dyson, Howard Gardner, Seymour Papert, Freeman Dyson, Esther Dyson, Kai Krause, and Pamela McCorduck.

In 2005, George Dyson noted in his prescient essay Turing's Cathedral [2]:

My visit to Google? Despite the whimsical furniture and other toys, I felt I was entering a 14th-century cathedral, not in the 14th century but in the 12th century, while it was being built. Everyone was busy carving one stone here and another stone there, with some invisible architect getting everything to fit. The mood was playful, yet there was a palpable reverence in the air. "We are not scanning all those books to be read by people," explained one of my hosts after my talk. "We are scanning them to be read by an AI."

When I returned to highway 101, I found myself recollecting the words of Alan Turing, in his seminal paper Computing Machinery and Intelligence, a founding document in the quest for true AI. "In attempting to construct such machines we should not be irreverently usurping His power of creating souls, any more than we are in the procreation of children," Turing had advised. "Rather we are, in either case, instruments of His will providing mansions for the souls that He creates."

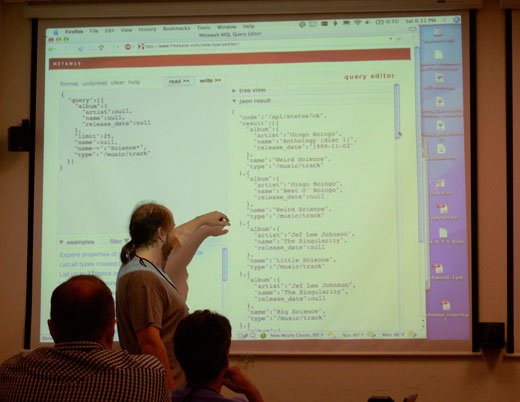

In March 2007, Hillis announced a new company called "Metaweb" and its free database, Freebase.com, and published a second Edge essay: "Addendum to 'Aristotle' (The Knowledge Web)." He wrote:

In retrospect the key idea in the "Aristotle" essay was this: if humans could contribute their knowledge to a database that could be read by computers, then the computers could present that knowledge to humans in the time, place and format that would be most useful to them. The missing link to make the idea work was a universal database containing all human knowledge, represented in a form that could be accessed, filtered and interpreted by computers.

One might reasonably ask: Why isn't that database the Wikipedia or even the World Wide Web? The answer is that these depositories of knowledge are designed to be read directly by humans, not interpreted by computers. They confound the presentation of information with the information itself. The crucial difference of the knowledge web is that the information is represented in the database, while the presentation is generated dynamically. Like Neal Stephenson's storybook, the information is filtered, selected and presented according to the specific needs of the viewer.

Danny Hillis at SciFoo at the Googleplex, July 2007

Last week, buried in the news on a summer Friday afternoon, was the announcement that Google had acquired Metaweb.

It all began with the technological breakthroughs in the realm of massively parallel computers and their associated algorithms. Credit for this goes to Hillis, who is primarily responsible for having broken through the von Neumann bottleneck of the serial computer.

At MIT in the late seventies, Hillis built his "connection machine," a computer that makes use of integrated circuits and, in its parallel operations, closely reflects the workings of the human mind. In 1983, he spun off a computer company called Thinking Machines, which built the world's fastest supercomputer by utilizing parallel architecture.

Hillis's computers, which were fast enough to simulate the process of evolution itself, showed that programs of random instructions can, by competing, produce new generations of programs, an approach that led to the creation of his Knowledge Web. Hillis's work demonstrates that when systems are not engineered but instead allowed to evolve, to build themselves, the resultant whole is greater than the sum of its parts. Simple entities working together produce some complex thing that transcends them; the implications for biology, engineering, and physics have been, and will increasingly be, enormous.

Philosopher Daniel C. Dennett noted that with the idea of a massively parallel architecture, which would be capable of exploring a different part of the space of possible computations, Hillis opened up a vast area:

What the British mathematician Alan Turing did, with the concept of the Turing machine, was to provide a succinct definition of the entire space of all possible computations. The machine developed by John von Neumann was a mechanical realization of Turing's idea. A von Neumann machine is the computer on your desk: the standard serial computer. In principle, the von Neumann machine (which is, for all practical purposes, a universal Turing machine) can compute any computable function; but if you don't have a billion years to wait around, you can't actually explore interesting parts of that space. The actual space explorable by any one architecture is quite limited. It sends this vanishingly thin thread out into this huge multidimensional space. To explore other parts of that space, you have got to invent other kinds of architecture. Massive parallel architectures are everybody's first, second, and third choices.

What Danny did was to create if not the first then one of the first really practical, really massive, parallel computers. It precipitated a gold rush. We had a new exploration vehicle, which was looking at portions of design space that had never been looked at before. Danny was very good at selling that idea to people in different scientific fields and demonstrating, with some of the early applications, just how powerful and exciting this vehicle was.

Two years ago this month, Hillis instigated an interesting Edge Reality Club conversation, cross-referenced with a discussion on the Encyclopedia Britannica website, on Nicholas Carr's Atlantic essay "Is Google Making Us Stupid?" (now expanded into Carr's book The Shallows). Hillis wrote:

We evolved in a world where our survival depended on an intimate knowledge of our surroundings. This is still true, but our surroundings have grown. We are now trying to comprehend the global village with minds that were designed to handle a patch of savanna and a close circle of friends. Our problem is not so much that we are stupider, but rather that the world is demanding that we become smarter. Forced to be broad, we sacrifice depth. We skim, we summarize, we skip the fine print and, all too often, we miss the fine point. We know we are drowning, but we do what we can to stay afloat.

As an optimist, I assume that we will eventually invent our way out of our peril, perhaps by building new technologies that make us smarter, or by building new societies that better fit our limitations. In the meantime, we will have to struggle. Herman Melville, as might be expected, put it better: "well enough they know they are in peril; well enough they know the causes of that peril; nevertheless, the sea is the sea, and these drowning men do drown."

We create tools and then we mold ourselves in their image. With The Hillis Knowledge Web, Hillis has proposed something new, something different. I can make a case that his "Aristotle" (The Knowledge Web) essay is the kind of seminal document, such as Turing's Computing Machinery and Intelligence and McCulloch et al.'s What the Frog's Eye Tells the Frog's Brain, that appears a few times in a century. But now, with the Google announcement, we will all find out, in Internet time, how his ideas play out in the real world.

Now is the time to revisit (in chronological order) Hillis's original 2004 essay ("'Aristotle': The Knowledge Web") [3], the ensuing Reality Club conversation [3], and his 2007 "Addendum to 'Aristotle'" [3], and have a conversation about where we are today regarding what I am taking the liberty of calling "The Hillis Knowledge Web".

— JB

For background reading on Hillis and his Knowledge Web, see:

Part V: "Something Beyond Ourselves [4]" in The Third Culture: Beyond the Scientific Revolution (1995)

"The Genius [5]" in Digerati: Encounters With The Cyber Elite (1996)

W. DANIEL (Danny) HILLIS is an inventor, scientist, engineer, author, and intellectual. He pioneered the concept of parallel computers that is now the basis for most supercomputers, as well as the RAID disk array technology used to store large databases. He holds over 100 U.S. patents, covering parallel computers, disk arrays, forgery prevention methods, and various electronic and mechanical devices. He is also the designer of a 10,000-year mechanical clock.

Presently, he is Chairman and Chief Technology Officer of Applied Minds, Inc., a research and development company in Los Angeles, creating a range of new products and services in software, entertainment, electronics, biotechnology and mechanical design. The company also provides advanced technology, creative design and consulting services to a variety of clients.

Previously, Hillis was Vice President, Research and Development at Walt Disney Imagineering, and a Disney Fellow. He developed new technologies and business strategies for Disney's theme parks, television, motion pictures, Internet and consumer products businesses. He also designed new theme park rides, a full-sized walking robot dinosaur and various micromechanical devices.

W. Daniel Hillis's Edge Bio Page [6]